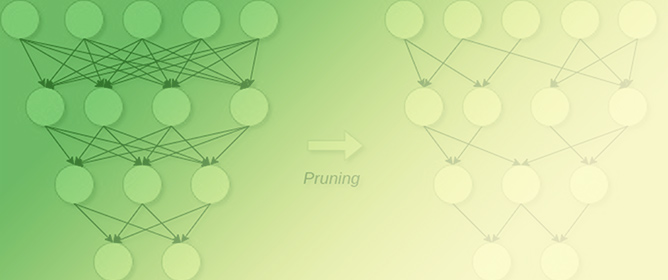

Activation-Based Pruning of Neural Networks

A Biased-Randomized Discrete Event Algorithm to Improve the Productivity of Automated Storage and Retrieval Systems in the Steel Industry

Enhancing Cryptocurrency Price Forecasting by Integrating Machine Learning with Social Media and Market Data

Deep Learning-Based Visual Complexity Analysis of Electroencephalography Time-Frequency Images: Can It Localize the Epileptogenic Zone in the Brain?

Journal Description

Algorithms is a peer-reviewed, open access journal that provides an advanced forum for studies related to algorithms and their applications. Algorithms is published monthly online by MDPI. The European Society for Fuzzy Logic and Technology (EUSFLAT) is affiliated with Algorithms, and its members receive discounts on the article processing charges.

- Open Access — free for readers, with article processing charges (APC) paid by authors or their institutions.

- High Visibility: indexed within Scopus, ESCI (Web of Science), Ei Compendex, MathSciNet and other databases.

- Journal Rank: CiteScore - Q2 (Numerical Analysis)

- Rapid Publication: manuscripts are peer-reviewed and a first decision is provided to authors approximately 15 days after submission; acceptance to publication is undertaken in 2.9 days (median values for papers published in this journal in the second half of 2023).

- Testimonials: See what our editors and authors say about Algorithms.

- Recognition of Reviewers: reviewers who provide timely, thorough peer-review reports receive vouchers entitling them to a discount on the APC of their next publication in any MDPI journal, in appreciation of the work done.

Impact Factor: 2.3 (2022); 5-Year Impact Factor: 2.2 (2022)

Latest Articles

Advancing Pulmonary Nodule Diagnosis by Integrating Engineered and Deep Features Extracted from CT Scans

Algorithms 2024, 17(4), 161; https://doi.org/10.3390/a17040161 - 18 Apr 2024

Abstract

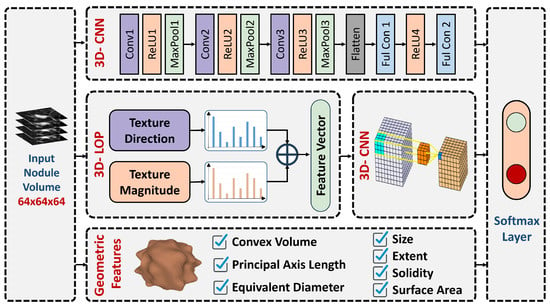

Enhancing lung cancer diagnosis requires precise early detection methods. This study introduces an automated diagnostic system that uses computed tomography (CT) scans for early lung cancer identification. The main approach integrates three distinct feature analyses: the novel 3D-Local Octal Pattern (LOP) descriptor for texture analysis, a 3D-Convolutional Neural Network (CNN) for extracting deep features, and geometric feature analysis to characterize pulmonary nodules. The 3D-LOP method captures nodule texture by analyzing the orientation and magnitude of voxel relationships, enabling the extraction of discriminative features. Simultaneously, the 3D-CNN extracts deep features from raw CT scans, providing comprehensive insights into nodule characteristics. Geometric features that assess nodule shape further augment this analysis, offering a holistic view of potential malignancies. By combining these analyses, our system employs a probability-based linear classifier to deliver a final diagnostic output. Validated on 822 Lung Image Database Consortium (LIDC) cases, the system’s performance was exceptional, with measures of

(This article belongs to the Special Issue Algorithms for Computer Aided Diagnosis)

Open Access Article

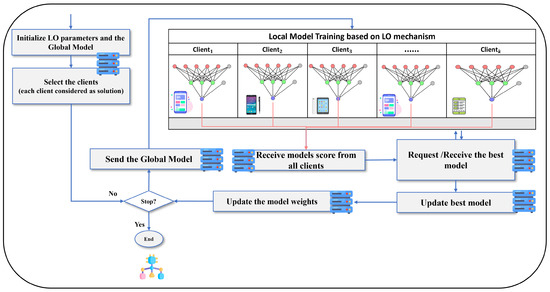

A Communication-Efficient Federated Learning Framework for Sustainable Development Using Lemurs Optimizer

by

Mohammed Azmi Al-Betar, Ammar Kamal Abasi, Zaid Abdi Alkareem Alyasseri, Salam Fraihat and Raghad Falih Mohammed

Algorithms 2024, 17(4), 160; https://doi.org/10.3390/a17040160 - 15 Apr 2024

Abstract

The pressing need for sustainable development solutions necessitates innovative data-driven tools. Machine learning (ML) offers significant potential but faces challenges in centralized approaches, particularly concerning data privacy and resource constraints in geographically dispersed settings. Federated learning (FL) emerges as a transformative paradigm for sustainable development by decentralizing ML training to edge devices. However, communication bottlenecks hinder its scalability and sustainability. This paper introduces a novel FL framework that enhances communication efficiency. The proposed framework addresses the communication bottleneck using the Lemurs optimizer (LO), a nature-inspired metaheuristic algorithm. Inspired by the cooperative foraging behavior of lemurs, the LO strategically selects the most relevant model updates for communication, significantly reducing communication overhead. The framework was rigorously evaluated on CIFAR-10, MNIST, rice leaf disease, and waste recycling plant datasets representing various areas of sustainable development. Experimental results demonstrate that the proposed framework reduces communication overhead by over 15% on average compared to baseline FL approaches, while maintaining high model accuracy. This result extends the applicability of FL to resource-constrained environments, paving the way for more scalable and sustainable solutions for real-world initiatives.

(This article belongs to the Section Algorithms for Multidisciplinary Applications)

Open Access Article

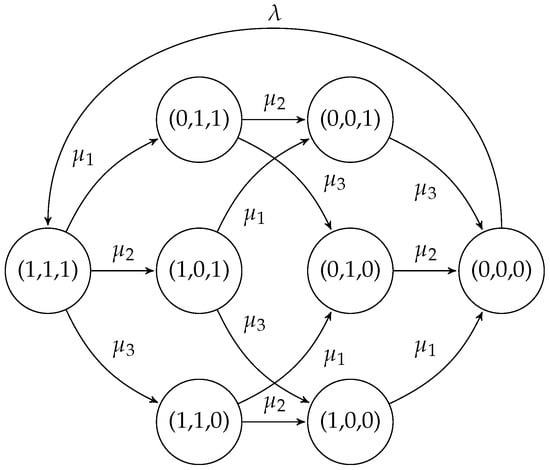

Efficient Algorithm for Proportional Lumpability and Its Application to Selfish Mining in Public Blockchains

by

Carla Piazza, Sabina Rossi and Daria Smuseva

Algorithms 2024, 17(4), 159; https://doi.org/10.3390/a17040159 - 15 Apr 2024

Abstract

This paper explores the concept of proportional lumpability as an extension of the original definition of lumpability, addressing the challenges posed by the state space explosion problem in computing performance indices for large stochastic models. Lumpability traditionally relies on state aggregation techniques and is applicable to Markov chains that exhibit structural regularity. Proportional lumpability extends this idea by proposing that the transition rates of a Markov chain can be modified by certain factors, yielding a new Markov chain that is lumpable. This concept allows exact performance indices to be derived for the original process. This paper shows that the problem of computing the coarsest proportional lumpability that refines a given initial partition is well defined, i.e., it has a unique solution. Additionally, a polynomial-time algorithm is introduced to solve this problem, offering insights into both the concept of proportional lumpability and the broader area of partition refinement techniques. The effectiveness of proportional lumpability is demonstrated through a case study that models selfish mining behavior on public blockchains. This research contributes to a better understanding of efficient approaches for handling large stochastic models and highlights the practical applicability of proportional lumpability in deriving exact performance indices.
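To make the lumpability condition concrete, here is a minimal sketch in Python that checks a given partition of a continuous-time Markov chain. It assumes our reading of the definition: for every pair of distinct blocks, the total rate from a state into the other block, divided by a positive per-state factor κ(s), must be the same for all states in the block; taking κ ≡ 1 gives ordinary (strong) lumpability. The function name `is_proportionally_lumpable` and the dense rate-matrix input are illustrative assumptions. The sketch only verifies a partition and κ that are supplied to it; it does not search for κ or compute the coarsest partition, which is what the paper's polynomial-time algorithm does.

```python
import math

def is_proportionally_lumpable(rates, partition, kappa=None, tol=1e-9):
    """Check a partition of CTMC states against a given per-state factor kappa.

    rates[s][t] is the transition rate from state s to state t (diagonal ignored).
    partition is a list of blocks, each a list of state indices.
    kappa[s] > 0; kappa=None means kappa(s) = 1 for every s, which reduces the
    check to ordinary (strong) lumpability.
    """
    n = len(rates)
    if kappa is None:
        kappa = [1.0] * n
    for bi, block in enumerate(partition):
        for ci, target in enumerate(partition):
            if bi == ci:
                continue  # rates inside a block are not constrained
            # Total rate from each state of `block` into `target`, divided by kappa.
            scaled = [sum(rates[s][t] for t in target) / kappa[s] for s in block]
            if not all(math.isclose(x, scaled[0], rel_tol=tol, abs_tol=tol) for x in scaled):
                return False
    return True

# Toy 3-state chains (hypothetical rates); partition {0}, {1, 2}.
rates = [[0, 2, 1], [3, 0, 5], [3, 4, 0]]
rates2 = [[0, 2, 1], [3, 0, 5], [6, 4, 0]]
print(is_proportionally_lumpable(rates, [[0], [1, 2]]))                     # True
print(is_proportionally_lumpable(rates2, [[0], [1, 2]]))                    # False
print(is_proportionally_lumpable(rates2, [[0], [1, 2]], kappa=[1, 1, 2]))   # True
```

In `rates2`, states 1 and 2 leave for state 0 at rates 3 and 6, so the partition is not ordinarily lumpable; scaling state 2 by κ = 2 makes the two rescaled rates agree.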

(This article belongs to the Special Issue 2024 and 2025 Selected Papers from Algorithms Editorial Board Members)

Open Access Article

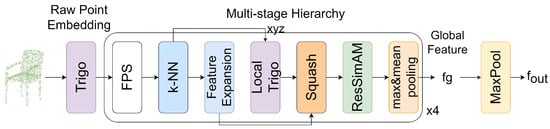

Point-Sim: A Lightweight Network for 3D Point Cloud Classification

by

Jiachen Guo and Wenjie Luo

Algorithms 2024, 17(4), 158; https://doi.org/10.3390/a17040158 - 15 Apr 2024

Abstract

Analyzing point clouds with neural networks is an active research topic. To analyze the 3D geometric features of point clouds, most neural networks improve performance by adding local geometric operators and trainable parameters. However, deep learning usually requires substantial computational resources for training and inference, which strains hardware and increases energy consumption. Therefore, some researchers have begun to use nonparametric approaches to extract features. Point-NN combines nonparametric modules to build a nonparametric network for 3D point cloud analysis; its nonparametric components include trigonometric embedding, farthest point sampling (FPS), k-nearest neighbors (k-NN), and pooling. However, Point-NN's trigonometric feature embedding has a degree of blindness during feature extraction. To reduce this blindness as much as possible, we use a nonparametric attention mechanism based on an energy function (ResSimAM): the energy of each embedded feature is computed with the energy function, and ResSimAM uses this energy to reweight the embedded features, enhancing them without adding any parameters to the original network. In addition, Point-NN computes the similarity between features in its naive feature similarity matching stage, but differences in feature magnitude in vector space arising during feature extraction may affect the final matching result. We therefore apply the Squash operation to the features. This nonlinear operation compresses each feature into a bounded range without changing its direction in vector space, eliminating the effect of feature magnitude so that naive feature matching in vector space works better.
We inserted these modules into the network to build a nonparametric network, Point-Sim, which performs well in 3D classification tasks. Building on this, we extend it to a lightweight neural network, Point-SimP, by adding a small number of trainable parameters for the point cloud classification task; it requires only 0.8 M parameters for high-performance analysis. Experimental results demonstrate the effectiveness of the proposed algorithm in point cloud shape classification: on ModelNet40 and ScanObjectNN, it achieves 83.9% and 66.3% with 0 M parameters (without any training) and 93.3% and 86.6% with 0.8 M parameters. Point-SimP reaches a test speed of 962 samples per second on the ModelNet40 dataset. These results show that the proposed method effectively improves the performance of point cloud classification networks.
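The effect of squashing on magnitude-sensitive matching can be illustrated with a small Python sketch. It assumes the Squash operation takes the capsule-network form, which maps a vector of norm r to the same direction with norm r²/(1 + r²); the abstract does not give the exact formula Point-Sim uses, so treat this as an illustration rather than the authors' implementation. The helper names `squash` and `dot` are hypothetical.

```python
import math

def squash(v, eps=1e-12):
    """Capsule-style squash: keep the direction of v, map its norm r to r^2 / (1 + r^2)."""
    sq_norm = sum(x * x for x in v)
    norm = math.sqrt(sq_norm)
    # eps avoids division by zero; the zero vector is mapped to itself.
    scale = sq_norm / ((1.0 + sq_norm) * (norm + eps))
    return [scale * x for x in v]

def dot(a, b):
    """Plain dot-product similarity, which is sensitive to feature magnitude."""
    return sum(x * y for x, y in zip(a, b))

query = [0.6, 0.8]                        # unit-length query feature
small, large = [3.0, 4.0], [30.0, 40.0]   # same direction, 10x different magnitude
print(dot(query, small), dot(query, large))                  # raw scores differ by 10x
print(dot(query, squash(small)), dot(query, squash(large)))  # both below 1: about 0.96 and 0.9996
```

Because the squashed norm always lies in [0, 1) and the direction is unchanged, features that point the same way receive nearly equal similarity scores regardless of their original magnitude.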

(This article belongs to the Special Issue Machine Learning for Pattern Recognition)

Open Access Systematic Review

Prime Number Sieving—A Systematic Review with Performance Analysis

by

Mircea Ghidarcea and Decebal Popescu

Algorithms 2024, 17(4), 157; https://doi.org/10.3390/a17040157 - 14 Apr 2024

Abstract

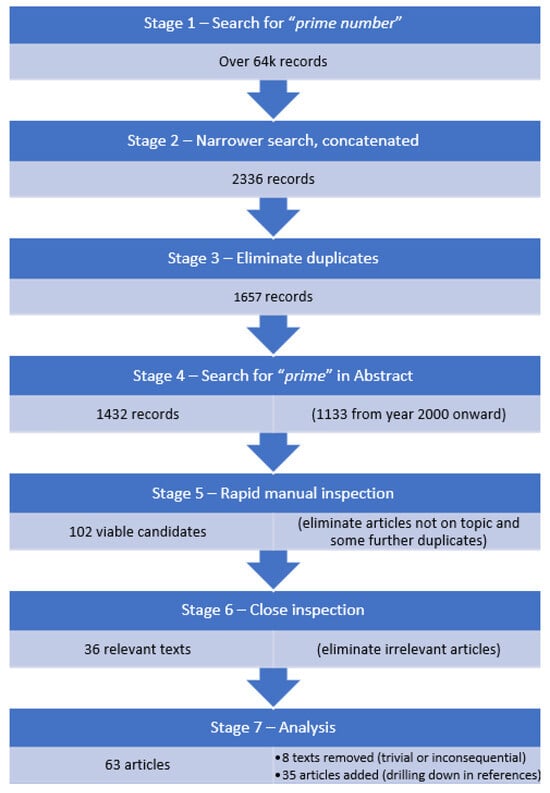

The systematic generation of prime numbers has been largely ignored since the 1990s, when most IT research resources related to prime numbers shifted to studies on the use of very large primes in cryptography, and little effort was made to further the knowledge of techniques such as sieving. At present, sieving techniques are mostly used for didactic purposes, and no real advances seem to have been made in this domain. This systematic review analyzes the theoretical advances in sieving that have occurred up to the present. The research followed the PRISMA 2020 guidelines and was conducted using three established databases: Web of Science, IEEE Xplore, and Scopus. Our methodical review aims to provide an extensive overview of the progress in prime sieving; unfortunately, no significant advancements in this field were identified in the last 20 years.
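As a reference point for the kind of technique the review covers, here is a minimal Python sketch of the classic Sieve of Eratosthenes. It is the textbook baseline, not one of the specific sieve variants or implementations analyzed in the review, and the function name is our own.

```python
def sieve_of_eratosthenes(limit):
    """Return all primes <= limit using the classic Sieve of Eratosthenes."""
    if limit < 2:
        return []
    is_prime = bytearray([1]) * (limit + 1)  # is_prime[i] == 1 means "not yet crossed out"
    is_prime[0] = is_prime[1] = 0
    p = 2
    while p * p <= limit:
        if is_prime[p]:
            # Start at p*p: smaller multiples of p were crossed out by smaller primes.
            is_prime[p * p :: p] = bytes(len(range(p * p, limit + 1, p)))
        p += 1
    return [i for i, flag in enumerate(is_prime) if flag]

print(sieve_of_eratosthenes(30))  # [2, 3, 5, 7, 11, 13, 17, 19, 23, 29]
```

The sieve runs in O(n log log n) time and O(n) memory, which is why segmented and wheel-based variants exist for large ranges.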

Open Access Article

Spike-Weighted Spiking Neural Network with Spiking Long Short-Term Memory: A Biomimetic Approach to Decoding Brain Signals

by

Kyle McMillan, Rosa Qiyue So, Camilo Libedinsky, Kai Keng Ang and Brian Premchand

Algorithms 2024, 17(4), 156; https://doi.org/10.3390/a17040156 - 12 Apr 2024

Abstract

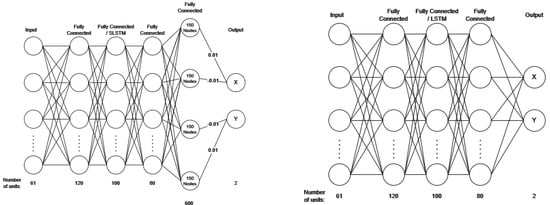

Background. Brain–machine interfaces (BMIs) allow users to communicate directly with digital devices through neural signals decoded with machine learning (ML)-based algorithms. Spiking Neural Networks (SNNs) are a type of Artificial Neural Network (ANN) that operate on neural spikes instead of continuous scalar outputs. Compared to traditional ANNs, SNNs perform fewer computations, use less memory, and better mimic biological neurons. However, SNNs only retain information for short durations, limiting their ability to capture long-term dependencies in time-variant data. Here, we propose a novel spike-weighted SNN with spiking long short-term memory (swSNN-SLSTM) for a regression problem. Spike-weighting captures neuronal firing rate instead of membrane potential, and the SLSTM layer captures long-term dependencies. Methods. We compared the performance of various ML algorithms in decoding directional movements, using a dataset of microelectrode recordings from a macaque during a directional joystick task, as well as an open-source dataset. We thus quantified how swSNN-SLSTM performed compared to existing ML models: an unscented Kalman filter, an LSTM-based ANN, and membrane-based SNN techniques. Results. The proposed swSNN-SLSTM outperforms the unscented Kalman filter, the LSTM-based ANN, and the membrane-based SNN technique. This shows that incorporating SLSTM can better capture long-term dependencies within neural data. In addition, the proposed swSNN-SLSTM algorithm shows promise in reducing power consumption and heat dissipation in implanted BMIs.

(This article belongs to the Special Issue Machine Learning in Medical Signal and Image Processing (2nd Edition))

Open Access Article

Impacting Robustness in Deep Learning-Based NIDS through Poisoning Attacks

by

Shahad Alahmed, Qutaiba Alasad, Jiann-Shiun Yuan and Mohammed Alawad

Algorithms 2024, 17(4), 155; https://doi.org/10.3390/a17040155 - 11 Apr 2024

Abstract

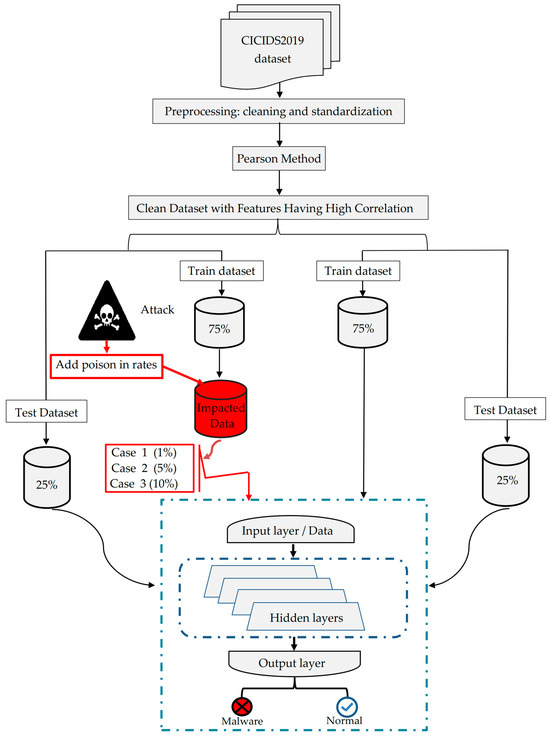

The rapid expansion and pervasive reach of the internet in recent years have raised concerns about evolving and adaptable online threats, particularly with the extensive integration of Machine Learning (ML) systems into our daily routines. These systems are increasingly becoming targets of malicious attacks that seek to distort their functionality through poisoning. Such attacks aim to warp the intended operation of these services, diverting them from their true purpose. Poisoning renders systems susceptible to unauthorized access, enabling illicit users to masquerade as legitimate ones and compromising the integrity of smart technology-based systems such as Network Intrusion Detection Systems (NIDSs). It is therefore necessary to continue studying the resilience of deep learning network systems under poisoning attacks, specifically attacks that interfere with the integrity of data conveyed over networks. This paper explores the resilience of deep learning (DL)-based NIDSs against untethered white-box attacks. More specifically, it introduces a poisoning attack technique designed for deep learning, which adds various amounts of altered instances into training datasets at diverse rates, and then investigates the attack’s influence on model performance. We observe that increasing injection rates (from 1% to 50%) and a random amplified distribution only slightly affected the overall performance of the system, as represented by accuracy (0.93) at the end of the experiments. However, the other measures, such as PPV (0.082), FPR (0.29), and MSE (0.67), indicate that the data manipulation poisoning attacks do impact the deep learning model. These findings shed light on the vulnerability of DL-based NIDSs under poisoning attacks and emphasize the importance of securing such systems against these sophisticated threats, for which defense techniques should be considered.
Our analysis, supported by experimental results, shows that the generated poisoned data significantly impacted the model performance and are hard to detect.

(This article belongs to the Special Issue Using Artificial Intelligence to Improve Security in the Software Development Cycle: Techniques, Challenges and Opportunities)

Open Access Article

Hybrid Newton-like Inverse Free Algorithms for Solving Nonlinear Equations

by

Ioannis K. Argyros, Santhosh George, Samundra Regmi and Christopher I. Argyros

Algorithms 2024, 17(4), 154; https://doi.org/10.3390/a17040154 - 10 Apr 2024

Abstract

Iterative algorithms that require the inversion of linear operators, which is in general computationally expensive, are difficult to implement. This is why hybrid Newton-like algorithms without inverses are developed in this paper to solve Banach space-valued nonlinear equations. The inverses of the linear operator are replaced by a finite sum of fixed linear operators. Two types of convergence analysis are presented for these algorithms: semilocal and local. The Fréchet derivative of the operator in the equation is controlled by a majorant function. The semilocal analysis also relies on majorizing sequences. The celebrated contraction mapping principle is used to study the convergence of the Krasnoselskij-like algorithm. Numerical experiments demonstrate that the new algorithms are essentially as effective but less expensive to implement. Although the new approach is demonstrated for Newton-like algorithms, it can be applied along the same lines to other single-step, multistep, or multipoint algorithms that use inverses of linear operators.

(This article belongs to the Special Issue Numerical Optimization and Algorithms: 2nd Edition)

Open Access Article

Smooth Information Criterion for Regularized Estimation of Item Response Models

by

Alexander Robitzsch

Algorithms 2024, 17(4), 153; https://doi.org/10.3390/a17040153 - 06 Apr 2024

Abstract

Item response theory (IRT) models are frequently used to analyze multivariate categorical data from questionnaires or cognitive test data. In order to reduce the model complexity in item response models, regularized estimation is now widely applied, adding a nondifferentiable penalty function like the LASSO or the SCAD penalty to the log-likelihood function in the optimization function. In most applications, regularized estimation repeatedly estimates the IRT model on a grid of regularization parameters

(This article belongs to the Special Issue Supervised and Unsupervised Classification Algorithms (2nd Edition))

Open Access Article

Resource Allocation of Cooperative Alternatives Using the Analytic Hierarchy Process and Analytic Network Process with Shapley Values

by

Jih-Jeng Huang and Chin-Yi Chen

Algorithms 2024, 17(4), 152; https://doi.org/10.3390/a17040152 - 05 Apr 2024

Abstract

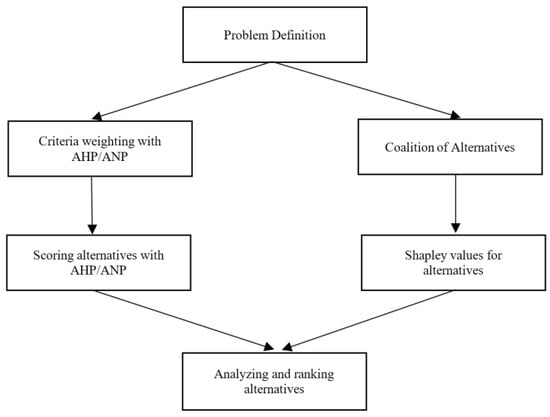

Cooperative alternatives require careful multi-criteria decision-making (MCDM), especially in resource allocation, where the alternatives exhibit interdependent relationships. Traditional MCDM methods such as the Analytic Hierarchy Process (AHP) and Analytic Network Process (ANP) often overlook the synergistic potential of cooperative alternatives. This study introduces a novel method integrating AHP/ANP with Shapley values, specifically designed to address this gap by evaluating alternatives both on their individual merits and on their contributions within coalitions. Our methodology begins with defining problem structures and applying AHP/ANP to determine the criteria weights and the alternatives’ scores. Subsequently, we compute Shapley values based on coalition values, synthesizing these findings to inform resource allocation decisions more equitably. A numerical example of budget allocation illustrates the method’s efficacy, revealing significant insights into resource distribution when cooperative dynamics are considered. Our results demonstrate the proposed method’s superiority in capturing the nuanced interplay between criteria and alternatives, leading to more informed urban planning decisions. This approach marks a significant advancement in MCDM, offering a comprehensive framework that combines the analytical rigor of AHP/ANP with the equitable considerations of cooperative game theory through Shapley values.
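The Shapley step can be sketched in a few lines of Python. The sketch assumes the coalition values have already been obtained (in the paper they come from the AHP/ANP stage, which is not reproduced here); the three alternatives and their coalition values below are hypothetical. Each alternative's Shapley value is its marginal contribution averaged over all orders in which the coalition could form.

```python
from fractions import Fraction
from itertools import combinations
from math import factorial

def shapley_values(players, value):
    """Exact Shapley values by enumerating every coalition (exponential in len(players)).

    value maps each frozenset of players (including the empty set) to the coalition's worth.
    """
    n = len(players)
    phi = {}
    for i in players:
        others = [p for p in players if p != i]
        total = Fraction(0)
        for k in range(n):  # size of the coalition that player i joins
            weight = Fraction(factorial(k) * factorial(n - k - 1), factorial(n))
            for coalition in combinations(others, k):
                s = frozenset(coalition)
                total += weight * (value[s | {i}] - value[s])  # marginal contribution of i
        phi[i] = total
    return phi

# Hypothetical coalition values for three budget alternatives A, B, C.
v = {
    frozenset(): 0,
    frozenset({"A"}): 10, frozenset({"B"}): 20, frozenset({"C"}): 30,
    frozenset({"A", "B"}): 40, frozenset({"A", "C"}): 50, frozenset({"B", "C"}): 60,
    frozenset({"A", "B", "C"}): 90,
}
phi = shapley_values(["A", "B", "C"], v)
print({p: str(x) for p, x in phi.items()})  # {'A': '20', 'B': '30', 'C': '40'}
```

The values sum to the grand-coalition worth of 90 (the efficiency property), so they can be normalized directly into budget shares. Exact enumeration is only practical for a small number of alternatives.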

(This article belongs to the Collection Feature Papers in Algorithms for Multidisciplinary Applications)

Open Access Article

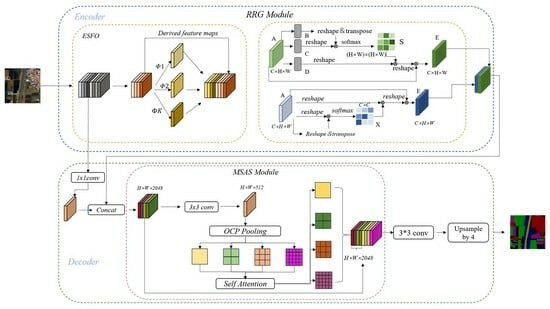

Research on Efficient Feature Generation and Spatial Aggregation for Remote Sensing Semantic Segmentation

by

Ruoyang Li, Shuping Xiong, Yinchao Che, Lei Shi, Xinming Ma and Lei Xi

Algorithms 2024, 17(4), 151; https://doi.org/10.3390/a17040151 - 04 Apr 2024

Abstract

Semantic segmentation algorithms leveraging deep convolutional neural networks often encounter challenges due to their extensive parameters, high computational complexity, and slow execution. To address these issues, we introduce a semantic segmentation network model emphasizing the rapid generation of redundant features and multi-level spatial aggregation. This model applies cost-efficient linear transformations instead of standard convolution operations during feature map generation, effectively managing memory usage and reducing computational complexity. To enhance the feature maps’ representation ability post-linear transformation, a specifically designed dual-attention mechanism is implemented, enhancing the model’s capacity for semantic understanding of both local and global image information. Moreover, the model integrates sparse self-attention with multi-scale contextual strategies, effectively combining features across different scales and spatial extents. This approach optimizes computational efficiency and retains crucial information, enabling precise and quick image segmentation. To assess the model’s segmentation performance, we conducted experiments in Changge City, Henan Province, using datasets such as LoveDA, PASCAL VOC, LandCoverNet, and DroneDeploy. These experiments demonstrated the model’s outstanding performance on public remote sensing datasets, significantly reducing the parameter count and computational complexity while maintaining high accuracy in segmentation tasks. This advancement offers substantial technical benefits for applications in agriculture and forestry, including land cover classification and crop health monitoring, thereby underscoring the model’s potential to support these critical sectors effectively.

(This article belongs to the Special Issue Algorithms in Data Classification (2nd Edition))

Open Access Article

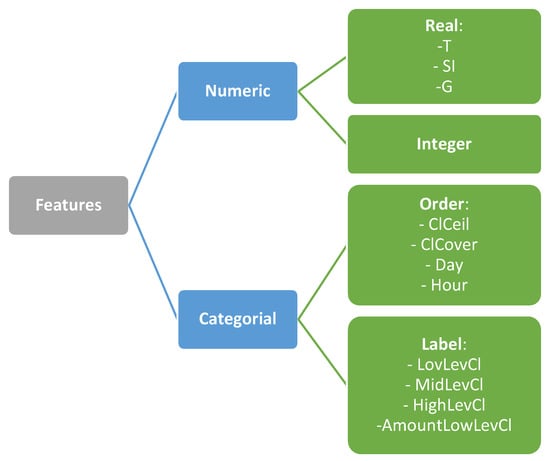

Solar Irradiance Forecasting with Natural Language Processing of Cloud Observations and Interpretation of Results with Modified Shapley Additive Explanations

by

Pavel V. Matrenin, Valeriy V. Gamaley, Alexandra I. Khalyasmaa and Alina I. Stepanova

Algorithms 2024, 17(4), 150; https://doi.org/10.3390/a17040150 - 02 Apr 2024

Abstract

Forecasting the generation of solar power plants (SPPs) requires taking into account meteorological parameters that influence the difference between the solar irradiance at the top of the atmosphere, which can be calculated with high accuracy, and the solar irradiance on the tilted plane of the solar panel at the Earth’s surface. One of the key factors is cloudiness, which can be described not only as the percentage of the sky covered by clouds but also by many additional parameters, such as the type of clouds, the distribution of clouds across atmospheric layers, and their height. Using machine learning algorithms to forecast SPP generation requires retrospective data over a long period and formalised features; however, retrospective data with detailed information about cloudiness are normally recorded in natural language. This paper proposes an algorithm that processes such records and converts them into a binary feature vector. Experiments conducted on data from a real solar power plant showed that this algorithm increases the accuracy of short-term solar irradiance forecasts by 5–15%, depending on the quality metric used. At the same time, adding features makes the model less transparent to the user, which is a significant drawback from the point of view of explainable artificial intelligence. Therefore, the paper uses an additive explanation algorithm based on the Shapley vector to interpret the model’s output. It is shown that this approach allows the machine learning model to explain why it generates a particular forecast, which can increase trust in intelligent information systems in the power industry.
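The general idea of turning free-text cloud observations into a binary feature vector can be sketched with simple keyword matching in Python. This is not the paper's algorithm: it assumes English-language records and uses a hypothetical vocabulary of cloud genera (`CLOUD_TERMS`), whereas the real records, feature set, and handling of word forms are not described in the abstract.

```python
import re

# Hypothetical vocabulary; the paper's actual feature set is not given in the abstract.
CLOUD_TERMS = [
    "cirrus", "cirrostratus", "altocumulus", "altostratus", "stratocumulus",
    "stratus", "nimbostratus", "cumulus", "cumulonimbus", "fog",
]

def record_to_binary(record, vocabulary=CLOUD_TERMS):
    """Map a free-text cloud observation to a 0/1 vector over a fixed vocabulary.

    Whole-token matching is used so that, e.g., "altostratus" does not also
    switch on the "stratus" feature, as substring matching would.
    """
    tokens = set(re.findall(r"[a-z]+", record.lower()))
    return [1 if term in tokens else 0 for term in vocabulary]

print(record_to_binary("Stratocumulus and altostratus, some cumulus"))
# [0, 0, 0, 1, 1, 0, 0, 1, 0, 0]
```

A production version would likely also need stemming or lemmatization for inflected languages and features for cloud layer and height, which this sketch omits.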

(This article belongs to the Special Issue Artificial Intelligence Algorithms in Sustainability)

Open Access Article

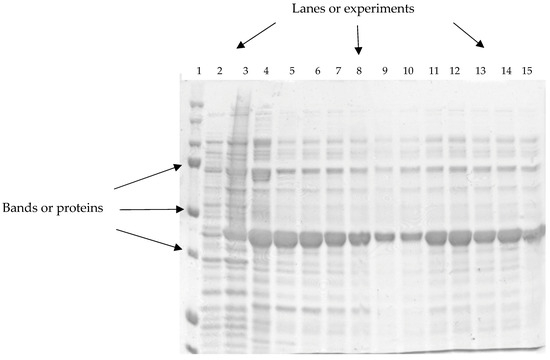

A New Algorithm for Detecting GPN Protein Expression and Overexpression of IDC and ILC Her2+ Subtypes on Polyacrylamide Gels Associated with Breast Cancer

by

Jorge Juarez-Lucero, Maria Guevara-Villa, Anabel Sanchez-Sanchez, Raquel Diaz-Hernandez and Leopoldo Altamirano-Robles

Algorithms 2024, 17(4), 149; https://doi.org/10.3390/a17040149 - 02 Apr 2024

Abstract

Sodium dodecyl sulfate–polyacrylamide gel electrophoresis (SDS-PAGE) is used to identify protein presence, absence, or overexpression, and its results are usually interpreted visually. Some published methods can localize proteins using image analysis of SDS-PAGE gel images. However, they cannot automatically determine the concentration or molecular weight of a particular protein band. In this article, a new methodology is developed to identify the number of samples present in an SDS-PAGE gel and the molecular weight of the recombinant protein. SDS-PAGE images of different concentrations of pure GPN protein were created to produce homogeneous gels. These images were then analyzed using the developed methodology, called Image Profile Based on Binarized Image Segmentation (IPBBIS). It is based on detecting the maximum intensity values of the analyzed bands and segments the images filtered by a binary mask. IPBBIS identifies the number of samples in an SDS-PAGE gel and the molecular weight of the recombinant protein of interest with a margin of error of 3.35%. An accuracy of 0.9850521 was achieved for homogeneous gels and 0.91736 for low-quality heterogeneous gels.

(This article belongs to the Special Issue Machine Learning for Pattern Recognition)

Open Access Article

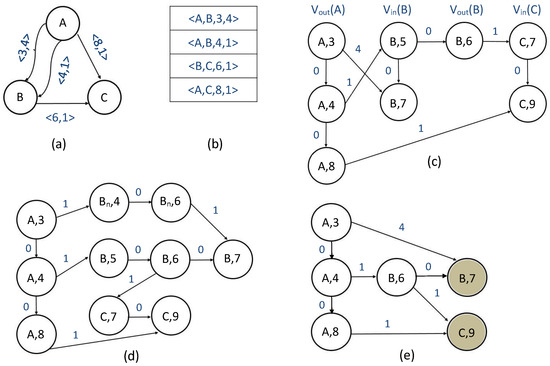

Path Algorithms for Contact Sequence Temporal Graphs

by

Sanaz Gheibi, Tania Banerjee, Sanjay Ranka and Sartaj Sahni

Algorithms 2024, 17(4), 148; https://doi.org/10.3390/a17040148 - 30 Mar 2024

Abstract

This paper proposes a new time-respecting graph (TRG) representation for contact sequence temporal graphs. Our representation is more memory-efficient than previously proposed representations and has run-time advantages over the ordered sequence of edges (OSE) representation, which is faster than other known representations. While our representation clearly outperforms the OSE representation for shallow neighborhood search problems, it is not evident that it does so for other problems. We demonstrate the competitiveness of our TRG representation for the single-source all-destinations fastest, min-hop, shortest, and foremost paths problems.

(This article belongs to the Collection Feature Papers in Combinatorial Optimization, Graph, and Network Algorithms)
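The abstract names the OSE representation as the fastest previously known baseline. For reference, the classic single-pass foremost-path (earliest-arrival) computation over an ordered sequence of edges can be sketched as follows. It illustrates the OSE baseline, not the authors' TRG representation. Each contact is assumed to be a tuple `(u, v, t, lam)` with departure time `t` and a strictly positive traversal time `lam`, so the arrival time at `v` is `t + lam`; the names are illustrative.

```python
import math
from typing import Dict, Hashable, Iterable, Tuple

# (u, v, departure time t, traversal time lam), with lam > 0 assumed.
Contact = Tuple[Hashable, Hashable, float, float]


def foremost_arrival(contacts: Iterable[Contact], source: Hashable,
                     t_start: float = 0.0) -> Dict[Hashable, float]:
    """Earliest arrival time at every vertex reachable from `source`."""
    # OSE: a single pass over the contacts in nondecreasing departure-time order.
    ose = sorted(contacts, key=lambda c: c[2])
    arrival = {source: t_start}
    for u, v, t, lam in ose:
        # A contact is usable only if we are already at u when it departs.
        if arrival.get(u, math.inf) <= t and t + lam < arrival.get(v, math.inf):
            arrival[v] = t + lam
    return arrival


contacts = [("a", "b", 1, 1), ("b", "c", 3, 1), ("b", "c", 1, 1), ("a", "c", 5, 2)]
print(foremost_arrival(contacts, "a"))  # {'a': 0.0, 'b': 2, 'c': 4}
```

Because every traversal time is positive, any contact leaving `v` after we reach it departs later than the contact that brought us there, so one sorted pass suffices.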

Open Access Article

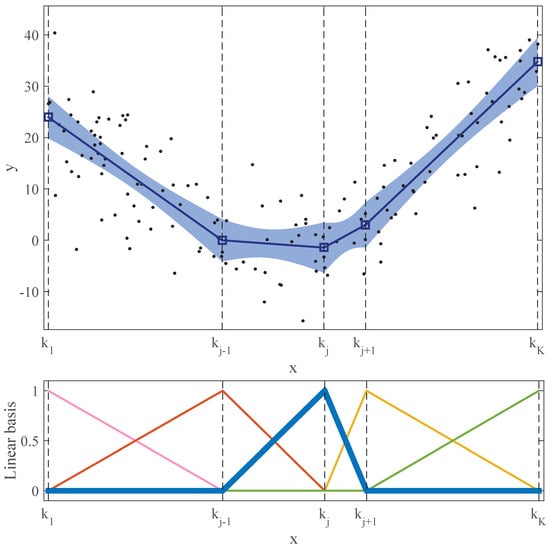

A Piecewise Linear Regression Model Ensemble for Large-Scale Curve Fitting

by

Santiago Moreno-Carbonell and Eugenio F. Sánchez-Úbeda

Algorithms 2024, 17(4), 147; https://doi.org/10.3390/a17040147 - 30 Mar 2024

Abstract

The Linear Hinges Model (LHM) is an efficient approach to flexible and robust one-dimensional curve fitting under stringent high-noise conditions. However, it was originally designed to run on a single-core processor with access to the whole input dataset. The surge in data volumes, together with the spread of parallel hardware architectures and specialised frameworks, has increased the need for new algorithms that can handle large-scale datasets and for techniques that adapt traditional machine learning algorithms to this new paradigm. This paper presents several ensemble alternatives, based on model selection and model combination, that obtain a continuous piecewise linear regression model from large-scale datasets using the LHM learning algorithm. Our empirical tests show that model combination outperforms model selection and that these methods can provide better results in terms of bias, variance, and execution time than the original algorithm executed over the entire dataset.

(This article belongs to the Special Issue Machine Learning Algorithms for Big Data Analysis (2nd Edition))
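The abstract does not describe the LHM learning algorithm itself. To illustrate the model-combination idea on a simpler stand-in, the sketch below fits a continuous piecewise linear model with fixed, user-chosen knots (a hinge basis solved by least squares, not LHM) on random chunks of the data, as separate workers might, and combines the chunk models by averaging their coefficients. Because every chunk model shares the same basis, averaging coefficients is equivalent to averaging predictions. `knots`, `n_chunks`, and `seed` are hypothetical parameters.

```python
import numpy as np


def hinge_basis(x: np.ndarray, knots) -> np.ndarray:
    # Intercept, slope, and one hinge max(0, x - k) per knot give a
    # continuous piecewise linear function of x.
    cols = [np.ones_like(x), x] + [np.maximum(0.0, x - k) for k in knots]
    return np.column_stack(cols)


def fit_pwl(x: np.ndarray, y: np.ndarray, knots) -> np.ndarray:
    coef, *_ = np.linalg.lstsq(hinge_basis(x, knots), y, rcond=None)
    return coef


def ensemble_fit(x: np.ndarray, y: np.ndarray, knots, n_chunks: int,
                 seed: int = 0) -> np.ndarray:
    # Randomly partition the data, as if each chunk lived on a separate worker.
    rng = np.random.default_rng(seed)
    chunks = np.array_split(rng.permutation(len(x)), n_chunks)
    coefs = [fit_pwl(x[idx], y[idx], knots) for idx in chunks]
    # Model combination: average the chunk models.
    return np.mean(coefs, axis=0)


def predict(coef: np.ndarray, x: np.ndarray, knots) -> np.ndarray:
    return hinge_basis(x, knots) @ coef


x = np.linspace(0.0, 10.0, 1000)
y = x + np.maximum(0.0, x - 5.0)  # slope 1 up to x = 5, slope 2 afterwards
coef = ensemble_fit(x, y, knots=[5.0], n_chunks=4)
print(predict(coef, np.array([2.0, 8.0]), [5.0]))
```

Random rather than contiguous chunks are used so that every chunk spans the whole input range; a chunk that saw only one side of a knot could not estimate that hinge.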

Open Access Article

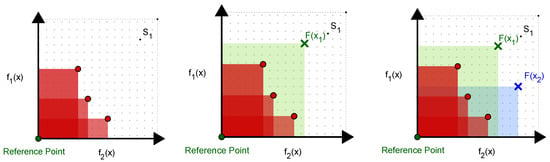

Multi-Objective BiLevel Optimization by Bayesian Optimization

by

Vedat Dogan and Steven Prestwich

Algorithms 2024, 17(4), 146; https://doi.org/10.3390/a17040146 - 30 Mar 2024

Abstract

In a multi-objective optimization problem, a decision-maker has more than one objective to optimize. In a bilevel optimization problem, there are two decision-makers in a hierarchy: a leader who makes the first decision and a follower who reacts, each aiming to optimize their own objective. Many real-world decision-making processes involve several objectives that must be optimized simultaneously while accounting for how the decision-makers affect each other. Combining both features yields a multi-objective bilevel optimization problem, which arises in manufacturing, logistics, environmental economics, defence applications, and many other areas. Many exact and approximation-based techniques have been proposed, but because of the intrinsic nonconvexity and the conflicting objectives, their computational cost is high. We propose a hybrid algorithm based on batch Bayesian optimization to approximate the upper-level Pareto-optimal solution set. We also extend our approach to handle uncertainty in the leader’s objectives via a hypervolume improvement-based acquisition function. Experiments show that our algorithm is more efficient than other current methods while successfully approximating Pareto fronts.

(This article belongs to the Special Issue Recent Advances in Multi-Objective Algorithms and Optimization 2023–2024)
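A hypervolume improvement-based acquisition function is built on the hypervolume indicator. A minimal sketch for two minimized objectives computes the area dominated by a set of points and bounded by a reference point, and the improvement a candidate point would add to it. In Bayesian optimization such a quantity is typically evaluated on surrogate-model predictions; the authors' exact acquisition function is not reproduced here, and the function names are illustrative.

```python
from typing import Iterable, Sequence, Tuple

Point = Tuple[float, float]


def hypervolume_2d(points: Iterable[Point], ref: Sequence[float]) -> float:
    """Area dominated by `points` (both objectives minimized) and bounded by `ref`."""
    # Only points that strictly dominate the reference point contribute.
    pts = sorted(p for p in points if p[0] < ref[0] and p[1] < ref[1])
    hv = 0.0
    best_f2 = ref[1]
    # Sweep in increasing f1; each non-dominated point adds a horizontal slab.
    for f1, f2 in pts:
        if f2 < best_f2:  # points with f2 >= best_f2 are dominated
            hv += (ref[0] - f1) * (best_f2 - f2)
            best_f2 = f2
    return hv


def hypervolume_improvement(candidate: Point, front: Iterable[Point],
                            ref: Sequence[float]) -> float:
    front = list(front)
    return hypervolume_2d(front + [candidate], ref) - hypervolume_2d(front, ref)


front = [(1.0, 3.0), (2.0, 2.0), (3.0, 1.0)]
print(hypervolume_2d(front, (4.0, 4.0)))                       # 6.0
print(hypervolume_improvement((1.5, 1.5), front, (4.0, 4.0)))  # 1.25
```

A candidate dominated by the current front has zero improvement, which is why this quantity favours points that push the Pareto front outward.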

Open Access Article

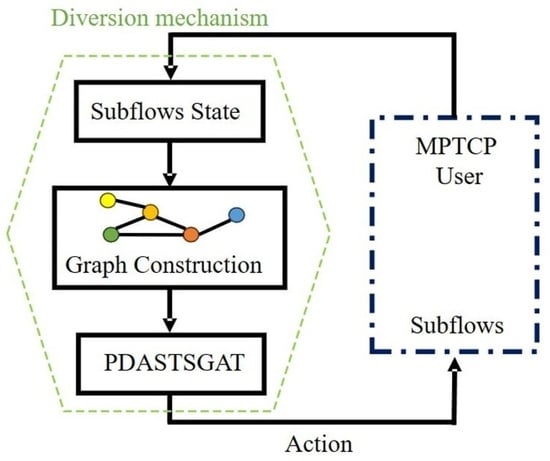

PDASTSGAT: An STSGAT-Based Multipath Data Scheduling Algorithm

by

Sen Xue, Chengyu Wu, Jing Han and Ao Zhan

Algorithms 2024, 17(4), 145; https://doi.org/10.3390/a17040145 - 30 Mar 2024

Abstract

Selecting the transmission path in Multipath TCP (MPTCP) scheduling is an important open problem. This paper proposes an intelligent data scheduling algorithm that uses spatiotemporal synchronous graph attention neural networks to improve MPTCP scheduling. By exploiting the spatiotemporal correlations in the data transmission process and incorporating graph self-attention mechanisms, the algorithm can quickly select the optimal transmission path while ensuring fairness among similar links. In NS3 simulations, the algorithm achieves a 7.9% throughput gain over the PDAA3C algorithm and improves packet transmission performance.

(This article belongs to the Special Issue Algorithms for Network Analysis: Theory and Practice)

Open Access Article

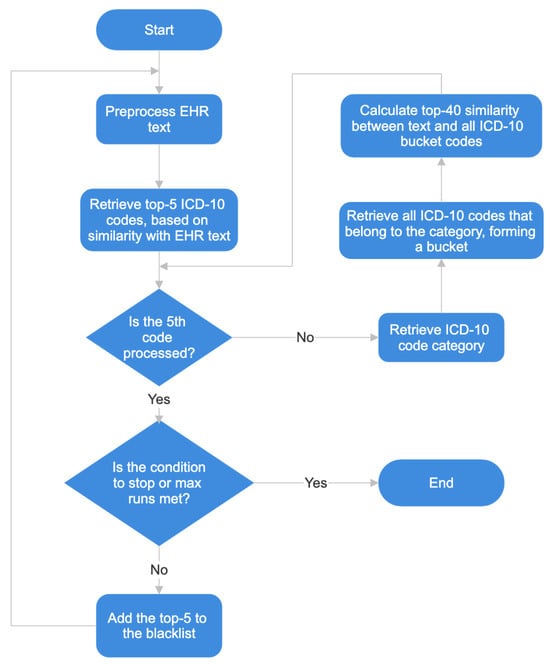

Aiding ICD-10 Encoding of Clinical Health Records Using Improved Text Cosine Similarity and PLM-ICD

by

Hugo Silva, Vítor Duque, Mário Macedo and Mateus Mendes

Algorithms 2024, 17(4), 144; https://doi.org/10.3390/a17040144 - 29 Mar 2024

Abstract

The International Classification of Diseases, 10th Revision (ICD-10), has been widely used to classify patient diagnostic information. This classification is usually performed by dedicated physicians with specific coding training, and it is a laborious task. Automatic classification is a challenging natural language processing task, and automatic methods have been proposed to aid the classification process. This paper proposes a method that combines cosine text similarity with a pretrained language model, PLM-ICD, to increase the number of potentially useful ICD-10 code suggestions, based on the Medical Information Mart for Intensive Care (MIMIC)-IV dataset. The results show that using multiple runs and bucket category search in the cosine method improves the results, providing more useful suggestions. In addition, a strategy combining the cosine method and PLM-ICD, called PLM-ICD-C, provides better results than PLM-ICD alone.

(This article belongs to the Special Issue Machine Learning Algorithms and Optimization in the Digital Transition)
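Cosine text similarity, one ingredient of the method above, can be sketched as ranking candidate codes by the cosine similarity between term-frequency vectors of a clinical note and of each code's description. The three ICD-10-CM codes and short descriptions below are illustrative only. The paper's multiple-run and bucket category search strategies, the MIMIC-IV data, and the combination with PLM-ICD are not reproduced, and `suggest_codes` is a hypothetical helper.

```python
import math
import re
from collections import Counter
from typing import Dict, List


def tokens(text: str) -> List[str]:
    # Lowercase alphanumeric tokens; no stemming or stop-word removal.
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def suggest_codes(note: str, code_descriptions: Dict[str, str], k: int = 3) -> List[str]:
    """Hypothetical helper: top-k codes whose descriptions best match the note."""
    query = Counter(tokens(note))
    scored = [(cosine(query, Counter(tokens(desc))), code)
              for code, desc in code_descriptions.items()]
    scored.sort(key=lambda s: s[0], reverse=True)
    # Drop codes that share no terms with the note.
    return [code for score, code in scored[:k] if score > 0]


descriptions = {
    "I21.9": "Acute myocardial infarction, unspecified",
    "J18.9": "Pneumonia, unspecified organism",
    "E11.9": "Type 2 diabetes mellitus without complications",
}
print(suggest_codes("Patient admitted with acute myocardial infarction", descriptions))
# ['I21.9']
```

Plain term overlap misses synonyms and abbreviations common in clinical notes, which is one reason to pair a lexical method like this with a pretrained language model.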

Open Access Article

An Objective Function-Based Clustering Algorithm with a Closed-Form Solution and Application to Reference Interval Estimation in Laboratory Medicine

by

Frank Klawonn and Georg Hoffmann

Algorithms 2024, 17(4), 143; https://doi.org/10.3390/a17040143 - 29 Mar 2024

Abstract

Clustering algorithms are usually iterative procedures. In particular, when a clustering algorithm optimises an objective function, as in k-means clustering or Gaussian mixture models, iterative heuristics are required because the objective function is highly non-linear. This implies higher computational costs and the risk of finding only a local optimum rather than the global optimum of the objective function. In this paper, we demonstrate that for one-dimensional clustering with one main cluster and one noise cluster, one can formulate an objective function that admits a closed-form solution, requiring no iteration scheme and guaranteeing the global optimum. We demonstrate how such an algorithm can be applied in laboratory medicine to estimate reference intervals, which represent the range of “normal” values.

(This article belongs to the Special Issue Nature-Inspired Algorithms in Machine Learning (2nd Edition))

Open Access Article

Dynamic Events in the Flexible Job-Shop Scheduling Problem: Rescheduling with a Hybrid Metaheuristic Algorithm

by

Shubhendu Kshitij Fuladi and Chang-Soo Kim

Algorithms 2024, 17(4), 142; https://doi.org/10.3390/a17040142 - 28 Mar 2024

Abstract

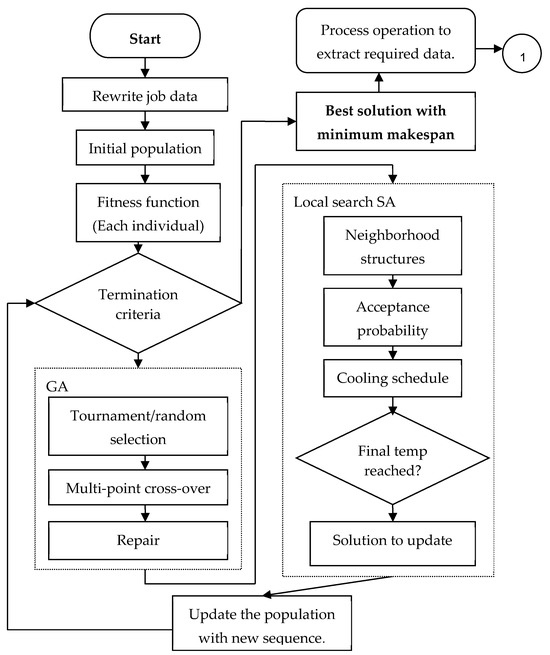

In real-world manufacturing systems, production planning is crucial for organizing and optimizing the components of the manufacturing process. This paper presents a methodology for both static and dynamic scheduling. The proposed method uses a hybrid algorithm to optimize the static flexible job-shop scheduling problem (FJSP) and the dynamic flexible job-shop scheduling problem (DFJSP). The algorithm integrates a genetic algorithm (GA) as a global optimization technique with simulated annealing (SA) as a local search approach, which accelerates convergence and helps avoid getting stuck in local minima. In addition, variable neighborhood search (VNS) is used for efficient neighborhood search within this hybrid framework. For the FJSP, the hybrid algorithm is evaluated on a dataset of 40 benchmark instances. Comparisons with other algorithms show that it solves the FJSP efficiently, producing better results on 38 of the 40 instances. The primary objective of this study is to perform dynamic scheduling on two datasets, covering single-purpose and multi-purpose machines, using the hybrid algorithm with a rescheduling strategy. For the DFJSP, results under dynamic events such as a single machine breakdown, a single job arrival, multiple machine breakdowns, and multiple job arrivals show that the hybrid algorithm with the rescheduling strategy achieves significant improvement, obtaining the best new solutions and substantially reducing the makespan.
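The abstract does not detail the encoding or the GA and VNS components. As a sketch of the SA local search step only, the code below assumes a common FJSP encoding: an operation sequence in which the k-th occurrence of job `j` denotes its k-th operation, plus a fixed machine assignment. It decodes a sequence into a semi-active schedule, returns the makespan, and improves the sequence with swap moves accepted by the Metropolis criterion. The instance format, the swap neighborhood, and the cooling parameters (`t0`, `alpha`, `iters`) are assumptions. Machine reassignment, which a full FJSP solver also searches, is omitted.

```python
import math
import random
from typing import Dict, List, Tuple

# proc[j][k] maps each eligible machine -> processing time of operation k of job j.
Proc = Dict[int, List[Dict[int, int]]]
Assign = Dict[Tuple[int, int], int]


def makespan(seq: List[int], assign: Assign, proc: Proc) -> int:
    """Decode an operation sequence into a semi-active schedule; return its makespan."""
    job_ready: Dict[int, int] = {}
    mach_ready: Dict[int, int] = {}
    next_op: Dict[int, int] = {}
    for j in seq:
        k = next_op.get(j, 0)  # the k-th occurrence of j is operation k
        next_op[j] = k + 1
        m = assign[(j, k)]
        start = max(job_ready.get(j, 0), mach_ready.get(m, 0))
        job_ready[j] = mach_ready[m] = start + proc[j][k][m]
    return max(job_ready.values())


def anneal(seq: List[int], assign: Assign, proc: Proc, t0: float = 10.0,
           alpha: float = 0.95, iters: int = 2000, seed: int = 0):
    """SA over operation sequences with swap moves and the Metropolis criterion."""
    rng = random.Random(seed)
    cur = list(seq)
    cur_cost = makespan(cur, assign, proc)
    best, best_cost = list(cur), cur_cost
    temp = t0
    for _ in range(iters):
        i, j = rng.sample(range(len(cur)), 2)
        cand = list(cur)
        cand[i], cand[j] = cand[j], cand[i]  # a swap keeps each job's operation count
        cost = makespan(cand, assign, proc)
        # Always accept non-worsening moves; accept worse ones with prob exp(-delta / temp).
        if cost <= cur_cost or rng.random() < math.exp((cur_cost - cost) / temp):
            cur, cur_cost = cand, cost
            if cost < best_cost:
                best, best_cost = list(cand), cost
        temp = max(temp * alpha, 1e-6)  # geometric cooling with a floor
    return best, best_cost


# Toy instance: two jobs, two operations each; job 0's first operation could run
# on machine 0 (3 units) or machine 1 (5 units), and is assigned to machine 0.
proc = {0: [{0: 3, 1: 5}, {1: 2}], 1: [{0: 2}, {1: 3}]}
assign = {(0, 0): 0, (0, 1): 1, (1, 0): 0, (1, 1): 1}
print(makespan([0, 0, 1, 1], assign, proc))  # 8
print(anneal([0, 0, 1, 1], assign, proc))
```

For this toy instance, enumerating all six valid sequences shows that 7 is the best achievable makespan under the fixed assignment (for example `[1, 0, 1, 0]`), so the annealer has a known target to reach.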

Topics

Topic in

Algorithms, Computation, Entropy, Fractal Fract, MCA

Analytical and Numerical Methods for Stochastic Biological Systems

Topic Editors: Mehmet Yavuz, Necati Ozdemir, Mouhcine Tilioua, Yassine Sabbar

Deadline: 10 May 2024

Topic in

Algorithms, Diagnostics, Entropy, Information, J. Imaging

Application of Machine Learning in Molecular Imaging

Topic Editors: Allegra Conti, Nicola Toschi, Marianna Inglese, Andrea Duggento, Matthew Grech-Sollars, Serena Monti, Giancarlo Sportelli, Pietro Carra

Deadline: 31 May 2024

Topic in

Algorithms, Axioms, Fractal Fract, Mathematics, Symmetry

Fractal and Design of Multipoint Iterative Methods for Nonlinear Problems

Topic Editors: Xiaofeng Wang, Fazlollah Soleymani

Deadline: 30 June 2024

Topic in

Algorithms, Computation, Information, Mathematics

Complex Networks and Social Networks

Topic Editors: Jie Meng, Xiaowei Huang, Minghui Qian, Zhixuan Xu

Deadline: 31 July 2024

Special Issues

Special Issue in

Algorithms

Hybrid Intelligent Algorithms

Guest Editors: Grigorios Beligiannis, Efstratios F. Georgopoulos, Spiridon D. Likothanassis, Isidoros Perikos, Ioannis X. Tassopoulos

Deadline: 30 April 2024

Special Issue in

Algorithms

Bio-Inspired Algorithms

Guest Editors: Sándor Szénási, Gábor Kertész

Deadline: 20 May 2024

Special Issue in

Algorithms

Algorithms for Smart Cities

Guest Editors: Gloria Cerasela Crisan, Elena Nechita

Deadline: 31 May 2024

Special Issue in

Algorithms

Algorithms for Games AI

Guest Editors: Wenxin Li, Haifeng Zhang

Deadline: 20 June 2024

Topical Collections

Topical Collection in

Algorithms

Feature Papers in Algorithms for Multidisciplinary Applications

Collection Editor: Francesc Pozo

Topical Collection in

Algorithms

Feature Papers in Randomized, Online and Approximation Algorithms

Collection Editor: Frank Werner

Topical Collection in

Algorithms

Featured Reviews of Algorithms

Collection Editors: Arun Kumar Sangaiah, Xingjuan Cai