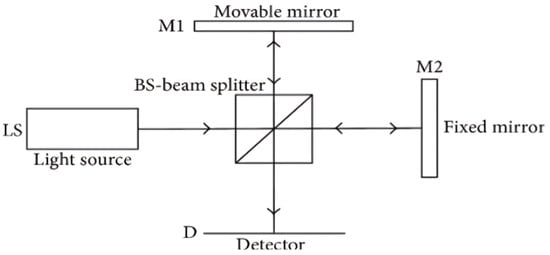

Source Camera Identification Techniques: A Survey

Point Projection Mapping System for Tracking, Registering, Labeling, and Validating Optical Tissue Measurements

Identifying the Causes of Unexplained Dyspnea at High Altitude Using Normobaric Hypoxia with Echocardiography

Fast Data Generation for Training Deep-Learning 3D Reconstruction Approaches for Camera Arrays

Journal Description

Journal of Imaging is an international, multi/interdisciplinary, peer-reviewed, open access journal of imaging techniques published online monthly by MDPI.

- Open Access: free for readers, with article processing charges (APC) paid by authors or their institutions.

- High Visibility: indexed within Scopus, ESCI (Web of Science), PubMed, PMC, dblp, Inspec, Ei Compendex, and other databases.

- Journal Rank: CiteScore - Q2 (Computer Graphics and Computer-Aided Design)

- Rapid Publication: manuscripts are peer-reviewed and a first decision is provided to authors approximately 21.7 days after submission; acceptance to publication is undertaken in 3.8 days (median values for papers published in this journal in the second half of 2023).

- Recognition of Reviewers: reviewers who provide timely, thorough peer-review reports receive vouchers entitling them to a discount on the APC of their next publication in any MDPI journal, in appreciation of the work done.

Impact Factor: 3.2 (2022); 5-Year Impact Factor: 3.2 (2022)

Latest Articles

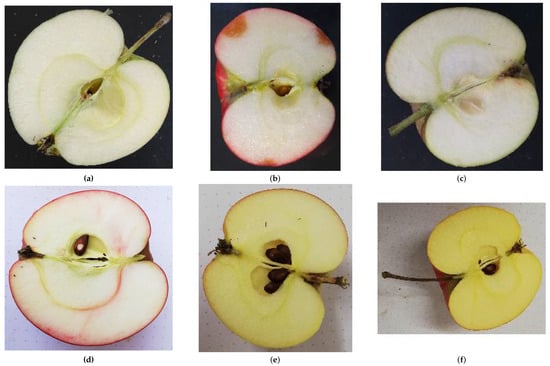

Enhancing Apple Cultivar Classification Using Multiview Images

J. Imaging 2024, 10(4), 94; https://doi.org/10.3390/jimaging10040094 - 17 Apr 2024

Abstract

Apple cultivar classification is challenging due to the inter-class similarity and high intra-class variations. Human experts do not rely on single-view features but rather study each viewpoint of the apple to identify a cultivar, paying close attention to various details. Following our previous work, we try to establish a similar multiview approach for machine-learning (ML)-based apple classification in this paper. In our previous work, we studied apple classification using one single view. While these results were promising, it also became clear that one view alone might not contain enough information in the case of many classes or cultivars. Therefore, exploring multiview classification for this task is the next logical step. Multiview classification is nothing new, and we use state-of-the-art approaches as a base. Our goal is to find the best approach for the specific apple classification task and study what is achievable with the given methods towards our future goal of applying this on a mobile device without the need for internet connectivity. In this study, we compare an ensemble model with two cases where we use single networks: one without view specialization trained on all available images without view assignment and one where we combine the separate views into a single image of one specific instance. The two latter options reflect dataset organization and preprocessing to allow the use of smaller models in terms of stored weights and number of operations than an ensemble model. We compare the different approaches based on our custom apple cultivar dataset. The results show that the state-of-the-art ensemble provides the best result. However, using images with combined views shows a decrease in accuracy by 3% while requiring only 60% of the memory for weights. Thus, simpler approaches with enhanced preprocessing can open a trade-off for classification tasks on mobile devices.

(This article belongs to the Special Issue Computer Vision and Deep Learning: Trends and Applications (2nd Edition))

Open Access Review

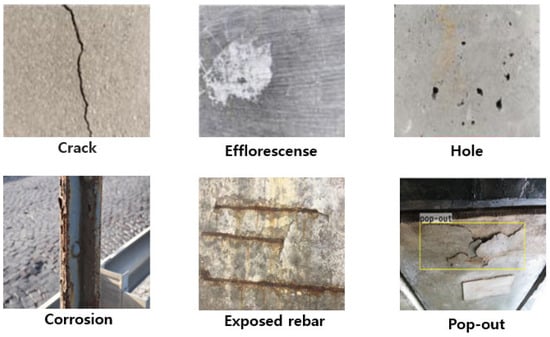

Review of Image-Processing-Based Technology for Structural Health Monitoring of Civil Infrastructures

by

Ji-Woo Kim, Hee-Wook Choi, Sung-Keun Kim and Wongi S. Na

J. Imaging 2024, 10(4), 93; https://doi.org/10.3390/jimaging10040093 - 16 Apr 2024

Abstract

The continuous monitoring of civil infrastructures is crucial for ensuring public safety and extending the lifespan of structures. In recent years, image-processing-based technologies have emerged as powerful tools for the structural health monitoring (SHM) of civil infrastructures. This review provides a comprehensive overview of the advancements, applications, and challenges associated with image processing in the field of SHM. The discussion encompasses various imaging techniques such as satellite imagery, Light Detection and Ranging (LiDAR), optical cameras, and other non-destructive testing methods. Key topics include the use of image processing for damage detection, crack identification, deformation monitoring, and overall structural assessment. This review explores the integration of artificial intelligence and machine learning techniques with image processing for enhanced automation and accuracy in SHM. By consolidating the current state of image-processing-based technology for SHM, this review aims to show the full potential of image-based approaches for researchers, engineers, and professionals involved in civil engineering, SHM, image processing, and related fields.

(This article belongs to the Special Issue Image Processing and Computer Vision: Algorithms and Applications)

Open Access Article

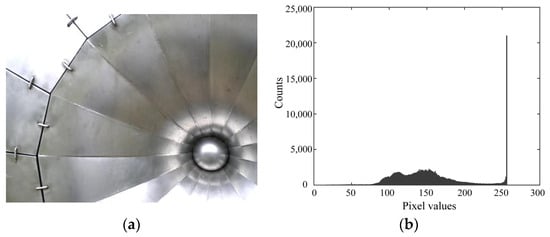

High Dynamic Range Image Reconstruction from Saturated Images of Metallic Objects

by

Shoji Tominaga and Takahiko Horiuchi

J. Imaging 2024, 10(4), 92; https://doi.org/10.3390/jimaging10040092 - 15 Apr 2024

Abstract

This study considers a method for reconstructing a high dynamic range (HDR) original image from a single saturated low dynamic range (LDR) image of metallic objects. A deep neural network approach was adopted for the direct mapping of an 8-bit LDR image to HDR. An HDR image database was first constructed using a large number of various metallic objects with different shapes. Each captured HDR image was clipped to create a set of 8-bit LDR images. All pairs of HDR and LDR images were used to train and test the network. Subsequently, a convolutional neural network (CNN) was designed in the form of a deep U-Net-like architecture. The network consisted of an encoder, a decoder, and a skip connection to maintain high image resolution. The CNN algorithm was constructed using the learning functions in MATLAB. The entire network consisted of 32 layers and 85,900 learnable parameters. The performance of the proposed method was examined in experiments using a test image set. The proposed method was also compared with other methods and confirmed to be significantly superior in terms of reconstruction accuracy, histogram fitting, and psychological evaluation.

(This article belongs to the Special Issue Imaging Technologies for Understanding Material Appearance)

Open Access Article

Correlated Decision Fusion Accompanied with Quality Information on a Multi-Band Pixel Basis for Land Cover Classification

by

Spiros Papadopoulos, Georgia Koukiou and Vassilis Anastassopoulos

J. Imaging 2024, 10(4), 91; https://doi.org/10.3390/jimaging10040091 - 12 Apr 2024

Abstract

Decision fusion plays a crucial role in achieving a cohesive and unified outcome by merging diverse perspectives. Within the realm of remote sensing classification, these methodologies become indispensable when synthesizing data from multiple sensors to arrive at conclusive decisions. In our study, we leverage fully Polarimetric Synthetic Aperture Radar (PolSAR) and thermal infrared data to establish distinct decisions for each pixel pertaining to its land cover classification. To enhance the classification process, we employ Pauli’s decomposition components and land surface temperature as features. This approach facilitates the extraction of local decisions for each pixel, which are subsequently integrated through majority voting to form a comprehensive global decision for each land cover type. Furthermore, we investigate the correlation between corresponding pixels in the data from each sensor, aiming to achieve pixel-level correlated decision fusion at the fusion center. Our methodology entails a thorough exploration of the employed classifiers, coupled with the mathematical foundations necessary for the fusion of correlated decisions. Quality information is integrated into the decision fusion process, ensuring a comprehensive and robust classification outcome. The novelty of the method is its simplicity in the number of features used as well as the simple way of fusing decisions.

(This article belongs to the Special Issue Data Processing with Artificial Intelligence in Thermal Imagery)

Open Access Article

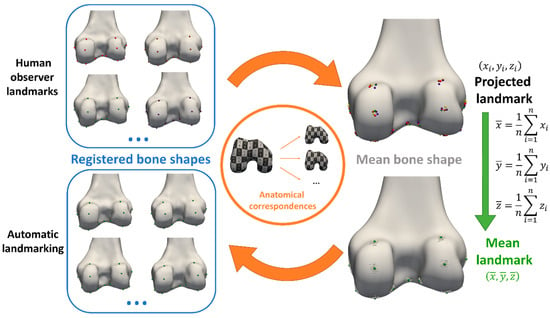

Automated Landmark Annotation for Morphometric Analysis of Distal Femur and Proximal Tibia

by

Jonas Grammens, Annemieke Van Haver, Imelda Lumban-Gaol, Femke Danckaers, Peter Verdonk and Jan Sijbers

J. Imaging 2024, 10(4), 90; https://doi.org/10.3390/jimaging10040090 - 11 Apr 2024

Abstract

Manual anatomical landmarking for morphometric knee bone characterization in orthopedics is highly time-consuming and shows high operator variability. Therefore, automation could be a substantial improvement for diagnostics and personalized treatments relying on landmark-based methods. Applications include implant sizing and planning, meniscal allograft sizing, and morphological risk factor assessment. For twenty MRI-based 3D bone and cartilage models, anatomical landmarks were manually applied by three experts, and morphometric measurements for 3D characterization of the distal femur and proximal tibia were calculated from all observations. One expert performed the landmark annotations three times. Intra- and inter-observer variations were assessed for landmark position and measurements. The mean of the three expert annotations served as the ground truth. Next, automated landmark annotation was performed by elastic deformation of a template shape, followed by landmark optimization at extreme positions (highest/lowest/most medial/lateral point). The results of our automated annotation method were compared with ground truth, and percentages of landmarks and measurements adhering to different tolerances were calculated. Reliability was evaluated by the intraclass correlation coefficient (ICC). For the manual annotations, the inter-observer absolute difference was 1.53 ± 1.22 mm (mean ± SD) for the landmark positions and 0.56 ± 0.55 mm (mean ± SD) for the morphometric measurements. Automated versus manual landmark extraction differed by an average of 2.05 mm. The automated measurements demonstrated an absolute difference of 0.78 ± 0.60 mm (mean ± SD) from their manual counterparts. Overall, 92% of the automated landmarks were within 4 mm of the expert mean position, and 95% of all morphometric measurements were within 2 mm of the expert mean measurements. The ICC (manual versus automated) for automated morphometric measurements was between 0.926 and 1. 
Manual annotations required on average 18 min of operator interaction time, while automated annotations only needed 7 min of operator-independent computing time. Considering the time consumption and variability among observers, there is a clear need for a more efficient, standardized, and operator-independent algorithm. Our automated method demonstrated excellent accuracy and reliability for landmark positioning and morphometric measurements. Above all, this automated method will lead to a faster, scalable, and operator-independent morphometric analysis of the knee.

(This article belongs to the Section Medical Imaging)

Open Access Article

Subjective Straylight Index: A Visual Test for Retinal Contrast Assessment as a Function of Veiling Glare

by

Francisco J. Ávila, Pilar Casado, Mª Concepción Marcellán, Laura Remón, Jorge Ares, Mª Victoria Collados and Sofía Otín

J. Imaging 2024, 10(4), 89; https://doi.org/10.3390/jimaging10040089 - 10 Apr 2024

Abstract

Spatial aspects of visual performance are usually evaluated through visual acuity charts and contrast sensitivity (CS) tests. CS tests are generated by vanishing the contrast level of the visual charts. However, the quality of retinal images can be affected by both ocular aberrations and scattering effects, and neither factor is incorporated as a parameter in visual tests in clinical practice. We propose a new computational methodology to generate visual acuity charts affected by ocular scattering effects. The generation of glare effects on the visual tests is achieved by combining an ocular straylight meter methodology with the Commission Internationale de l’Eclairage’s (CIE) general disability glare formula. A new function for retinal contrast assessment is proposed, the subjective straylight function (SSF), which provides the maximum tolerance to the perception of straylight in an observed visual acuity test. Once the SSF is obtained, the subjective straylight index (SSI) is defined as the area under the SSF curve. Results report the normal values of the SSI in a population of 30 young healthy subjects (19 ± 1 years old); a peak centered at SSI = 0.46 of a normal distribution was found. SSI was also evaluated as a function of both spatial and temporal aspects of vision. Ocular wavefront measures revealed a statistical correlation of the SSI with defocus and trefoil terms. In addition, the time recovery (TR) after induced total disability glare and the SSI were related; in particular, the higher the TR, the greater the SSI value for high- and mid-contrast levels of the visual test. No relationships were found for low-contrast visual targets. To conclude, a new computational method for retinal contrast assessment as a function of ocular straylight was proposed as a complementary subjective test for visual function performance.

(This article belongs to the Special Issue Advances in Retinal Image Processing)

Open Access Article

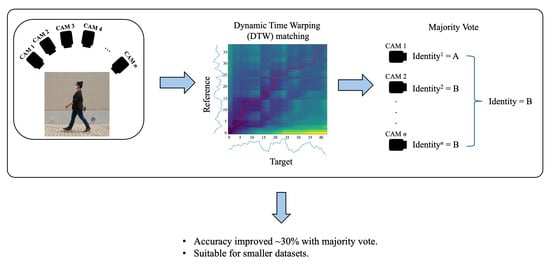

Multi-View Gait Analysis by Temporal Geometric Features of Human Body Parts

by

Thanyamon Pattanapisont, Kazunori Kotani, Prarinya Siritanawan, Toshiaki Kondo and Jessada Karnjana

J. Imaging 2024, 10(4), 88; https://doi.org/10.3390/jimaging10040088 - 09 Apr 2024

Abstract

A gait is a walking pattern that can help identify a person. Recently, gait analysis employed a vision-based pose estimation for further feature extraction. This research aims to identify a person by analyzing their walking pattern. Moreover, the authors intend to expand gait analysis for other tasks, e.g., the analysis of clinical, psychological, and emotional tasks. The vision-based human pose estimation method is used in this study to extract the joint angles and rank correlation between them. We deploy the multi-view gait databases for the experiment, i.e., CASIA-B and OUMVLP-Pose. The features are separated into three parts, i.e., whole, upper, and lower body features, to study the effect of the human body part features on an analysis of the gait. For person identity matching, a minimum Dynamic Time Warping (DTW) distance is determined. Additionally, we apply a majority voting algorithm to integrate the separated matching results from multiple cameras to enhance accuracy, and it improved up to approximately 30% compared to matching without majority voting.
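The matching step described in this abstract (minimum DTW distance per camera, then majority voting across cameras) can be illustrated with a small sketch. This is not the authors' implementation: the one-dimensional joint-angle sequences, the camera names, the identity labels, and the helper names `dtw_distance`, `match_identity`, and `majority_vote` are all assumptions made for illustration, and the rank-correlation features mentioned in the abstract are left out.

```python
from collections import Counter
from math import inf


def dtw_distance(a, b):
    """Classic dynamic-programming DTW between two 1-D sequences."""
    n, m = len(a), len(b)
    # cost[i][j] = minimal accumulated cost of aligning a[:i] with b[:j]
    cost = [[inf] * (m + 1) for _ in range(n + 1)]
    cost[0][0] = 0.0
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            step = abs(a[i - 1] - b[j - 1])
            cost[i][j] = step + min(cost[i - 1][j], cost[i][j - 1], cost[i - 1][j - 1])
    return cost[n][m]


def match_identity(probe, gallery):
    """Return the gallery label whose sequence has the minimum DTW distance to the probe."""
    return min(gallery, key=lambda label: dtw_distance(probe, gallery[label]))


def majority_vote(labels):
    """Return the most frequent label among the per-camera decisions."""
    return Counter(labels).most_common(1)[0][0]


# Hypothetical joint-angle sequences (degrees) for two enrolled people, per camera.
gallery_by_camera = {
    "cam0": {"alice": [10, 20, 30, 20, 10], "bob": [5, 5, 40, 5, 5]},
    "cam1": {"alice": [12, 22, 28, 18, 9], "bob": [6, 4, 42, 6, 4]},
    "cam2": {"alice": [11, 19, 31, 21, 10], "bob": [5, 6, 39, 4, 5]},
}
# Hypothetical probe: the same walker seen by three cameras; cam1 is a misleading view.
probe_by_camera = {
    "cam0": [10, 20, 20, 30, 20, 10],
    "cam1": [6, 5, 41, 41, 5, 5],
    "cam2": [11, 20, 30, 20, 11],
}

per_camera = [match_identity(probe_by_camera[c], gallery_by_camera[c]) for c in gallery_by_camera]
identity = majority_vote(per_camera)
print(per_camera, "->", identity)
```

In this toy setup one camera votes for the wrong person, and the vote across the three cameras still recovers the other label, which is the kind of effect the abstract attributes to majority voting.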

(This article belongs to the Special Issue Image Processing and Computer Vision: Algorithms and Applications)

Open Access Article

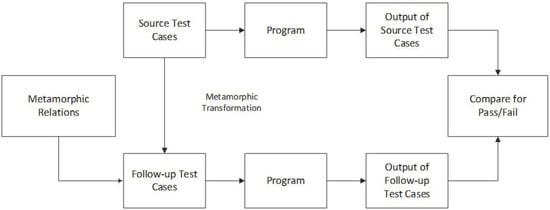

Measuring Effectiveness of Metamorphic Relations for Image Processing Using Mutation Testing

by

Fakeeha Jafari and Aamer Nadeem

J. Imaging 2024, 10(4), 87; https://doi.org/10.3390/jimaging10040087 - 06 Apr 2024

Abstract

Testing an intricate plexus of advanced software system architecture is quite challenging due to the absence of test oracle. Metamorphic testing is a popular technique to alleviate the test oracle problem. The effectiveness of metamorphic testing is dependent on metamorphic relations (MRs). MRs represent the essential properties of the system under test and are evaluated by their fault detection rates. The existing techniques for the evaluation of MRs are not comprehensive, as very few mutation operators are used to generate very few mutants. In this research, we have proposed six new MRs for dilation and erosion operations. The fault detection rate of six newly proposed MRs is determined using mutation testing. We have used eight applicable mutation operators and determined their effectiveness. By using these applicable operators, we have ensured that all the possible numbers of mutants are generated, which shows that all the faults in the system under test are fully identified. Results of the evaluation of four MRs for edge detection show an improvement in all the respective MRs, especially in MR1 and MR4, with a fault detection rate of 76.54% and 69.13%, respectively, which is 32% and 24% higher than the existing technique. The fault detection rate of MR2 and MR3 is also improved by 1%. Similarly, results of dilation and erosion show that out of 8 MRs, the fault detection rates of four MRs are higher than the existing technique. In the proposed technique, MR1 is improved by 39%, MR4 is improved by 0.5%, MR6 is improved by 17%, and MR8 is improved by 29%. We have also compared the results of our proposed MRs with the existing MRs of dilation and erosion operations. Results show that the proposed MRs complement the existing MRs effectively as the new MRs can find those faults that are not identified by the existing MRs.
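To make the idea of scoring metamorphic relations by mutation testing concrete, here is a minimal sketch for binary dilation. The two relations (mirror symmetry and extensivity) and the single off-by-one mutant are illustrative assumptions; they are not the six MRs or the eight mutation operators proposed in the article, and `dilate`, `mr_mirror`, `mr_extensive`, and `mutant_dilate` are hypothetical names.

```python
def dilate(img, offsets=(-1, 0, 1)):
    """Binary dilation with a square structuring element; pixels outside the image are ignored."""
    h, w = len(img), len(img[0])
    out = [[0] * w for _ in range(h)]
    for r in range(h):
        for c in range(w):
            out[r][c] = max(
                img[r + dr][c + dc]
                for dr in offsets
                for dc in offsets
                if 0 <= r + dr < h and 0 <= c + dc < w
            )
    return out


def hflip(img):
    """Mirror the image left to right."""
    return [row[::-1] for row in img]


def mr_mirror(op, img):
    """MR: with a symmetric structuring element, flipping then dilating equals dilating then flipping."""
    return op(hflip(img)) == hflip(op(img))


def mr_extensive(op, img):
    """MR: dilation never turns a foreground pixel into background."""
    out = op(img)
    return all(out[r][c] >= img[r][c] for r in range(len(img)) for c in range(len(img[0])))


def mutant_dilate(img):
    """Mutant: off-by-one neighbourhood bound, so the structuring element is no longer symmetric."""
    return dilate(img, offsets=(-1, 0))


source = [
    [0, 0, 0, 0],
    [0, 1, 0, 0],
    [0, 0, 0, 0],
    [0, 0, 0, 0],
]
relations = {"mirror": mr_mirror, "extensive": mr_extensive}

# A mutant is "killed" by a relation when the relation is violated on the mutated program.
killed = {name: not mr(mutant_dilate, source) for name, mr in relations.items()}
print(killed)
```

Both relations hold for the unmutated `dilate`, but only the mirror relation detects this particular mutant. Counting such kills over many mutants gives a fault detection rate per relation, which is the quantity the abstract compares.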

Open Access Systematic Review

Denoising of Optical Coherence Tomography Images in Ophthalmology Using Deep Learning: A Systematic Review

by

Hanya Ahmed, Qianni Zhang, Robert Donnan and Akram Alomainy

J. Imaging 2024, 10(4), 86; https://doi.org/10.3390/jimaging10040086 - 01 Apr 2024

Abstract

Imaging from optical coherence tomography (OCT) is widely used for detecting retinal diseases, localization of intra-retinal boundaries, etc. It is, however, degraded by speckle noise. Deep learning models can aid with denoising, allowing clinicians to clearly diagnose retinal diseases. Deep learning models can be considered as an end-to-end framework. We selected denoising studies that used deep learning models with retinal OCT imagery. Each study was quality-assessed through image quality metrics (including the peak signal-to-noise ratio—PSNR, contrast-to-noise ratio—CNR, and structural similarity index metric—SSIM). Meta-analysis could not be performed due to heterogeneity in the methods of the studies and measurements of their performance. Multiple databases (including Medline via PubMed, Google Scholar, Scopus, Embase) and a repository (ArXiv) were screened for publications published after 2010, without any limitation on language. From the 95 potential studies identified, a total of 41 were evaluated thoroughly. Fifty-four of these studies were excluded after full text assessment depending on whether deep learning (DL) was utilized or the dataset and results were not effectively explained. Numerous types of OCT images are mentioned in this review consisting of public retinal image datasets utilized purposefully for denoising OCT images (n = 37) and the Optic Nerve Head (ONH) (n = 4). A wide range of image quality metrics was used; PSNR and SNR that ranged between 8 and 156 dB. The minority of studies (n = 8) showed a low risk of bias in all domains. Studies utilizing ONH images produced either a PSNR or SNR value varying from 8.1 to 25.7 dB, and that of public retinal datasets was 26.4 to 158.6 dB. Further analysis on denoising models was not possible due to discrepancies in reporting that did not allow useful pooling. 
An increasing number of studies have investigated denoising retinal OCT images using deep learning, with a range of architectures being implemented. The reported increase in image quality metrics seems promising, while study and reporting quality are currently low.
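For readers unfamiliar with the decibel values quoted above, the following sketch computes PSNR using its standard definition, 10·log10(MAX² / MSE). It assumes 8-bit images flattened into plain Python lists; the function name `psnr` and the sample pixel values are hypothetical and are not taken from any of the reviewed studies.

```python
import math


def psnr(reference, test, max_value=255.0):
    """Peak signal-to-noise ratio in dB between two equally sized, flattened images."""
    if len(reference) != len(test):
        raise ValueError("images must have the same number of pixels")
    mse = sum((r - t) ** 2 for r, t in zip(reference, test)) / len(reference)
    if mse == 0:
        return math.inf  # identical images: PSNR is unbounded
    return 10.0 * math.log10(max_value ** 2 / mse)


# Hypothetical 4-pixel example: every pixel is off by 5 grey levels, so MSE = 25.
clean = [5, 250, 128, 64]
noisy = [0, 255, 123, 69]
print(round(psnr(clean, noisy), 2))
```

With a mean squared error of 25 on an 8-bit scale this comes to about 34.15 dB. Because PSNR depends on the assumed peak value and on how the reference image is chosen, values from different studies are only comparable when those choices match, which is consistent with the review's remark that pooling was not possible.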

(This article belongs to the Special Issue Advances in Retinal Image Processing)

Open Access Article

Knowledge Distillation in Video-Based Human Action Recognition: An Intuitive Approach to Efficient and Flexible Model Training

by

Fernando Camarena, Miguel Gonzalez-Mendoza and Leonardo Chang

J. Imaging 2024, 10(4), 85; https://doi.org/10.3390/jimaging10040085 - 30 Mar 2024

Abstract

Training a model to recognize human actions in videos is computationally intensive. While modern strategies employ transfer learning methods to make the process more efficient, they still face challenges regarding flexibility and efficiency. Existing solutions are limited in functionality and rely heavily on pretrained architectures, which can restrict their applicability to diverse scenarios. Our work explores knowledge distillation (KD) for enhancing the training of self-supervised video models in three aspects: improving classification accuracy, accelerating model convergence, and increasing model flexibility under regular and limited-data scenarios. We tested our method on the UCF101 dataset using differently balanced proportions: 100%, 50%, 25%, and 2%. We found that using knowledge distillation to guide the model’s training outperforms traditional training without affecting the classification accuracy and while reducing the convergence rate of model training in standard settings and a data-scarce environment. Additionally, knowledge distillation enables cross-architecture flexibility, allowing model customization for various applications: from resource-limited to high-performance scenarios.

(This article belongs to the Special Issue Deep Learning in Computer Vision)

Open Access Article

Advanced Planar Projection Contour (PPC): A Novel Algorithm for Local Feature Description in Point Clouds

by

Wenbin Tang, Yinghao Lv, Yongdang Chen, Linqing Zheng and Runxiao Wang

J. Imaging 2024, 10(4), 84; https://doi.org/10.3390/jimaging10040084 - 29 Mar 2024

Abstract

Local feature description of point clouds is essential in 3D computer vision. However, many local feature descriptors for point clouds struggle with inadequate robustness, excessive dimensionality, and poor computational efficiency. To address these issues, we propose a novel descriptor based on Planar Projection Contours, characterized by convex hull contour information. We construct the Local Reference Frame (LRF) through covariance analysis of the query point and its neighboring points. Neighboring points are projected onto three orthogonal planes defined by the LRF. These projection points on the planes are fitted into convex hull contours and encoded as local features. These planar features are then concatenated to create the Planar Projection Contour (PPC) descriptor. We evaluated the performance of the PPC descriptor against classical descriptors using the B3R, UWAOR, and Kinect datasets. Experimental results demonstrate that the PPC descriptor achieves an accuracy exceeding 80% across all recall levels, even under high-noise and point density variation conditions, underscoring its effectiveness and robustness.

Open Access Review

Human Attention Restoration, Flow, and Creativity: A Conceptual Integration

by

Teresa P. Pham and Thomas Sanocki

J. Imaging 2024, 10(4), 83; https://doi.org/10.3390/jimaging10040083 - 29 Mar 2024

Abstract

In today’s fast-paced, attention-demanding society, executive functions and attentional resources are often taxed. Individuals need ways to sustain and restore these resources. We first review the concepts of attention and restoration, as instantiated in Attention Restoration Theory (ART). ART emphasizes the role of nature in restoring attention. We then discuss the essentials of experiments on the causal influences of nature. Next, we expand the concept of ART to include modern, designed environments. We outline a wider perspective termed attentional ecology, in which attention behavior is viewed within a larger system involving the human and their interactions with environmental demands over time. When the ecology is optimal, mental functioning can be a positive “flow” that is productive, sustainable for the individual, and sometimes creative.

(This article belongs to the Special Issue Human Attention and Visual Cognition (Volume II))

Open Access Article

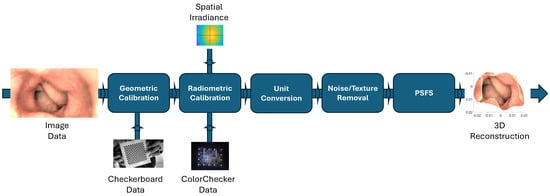

Single-Image-Based 3D Reconstruction of Endoscopic Images

by

Bilal Ahmad, Pål Anders Floor, Ivar Farup and Casper Find Andersen

J. Imaging 2024, 10(4), 82; https://doi.org/10.3390/jimaging10040082 - 28 Mar 2024

Abstract

A wireless capsule endoscope (WCE) is a medical device designed for the examination of the human gastrointestinal (GI) tract. Three-dimensional models based on WCE images can assist in diagnostics by effectively detecting pathology. These 3D models provide gastroenterologists with improved visualization, particularly in areas of specific interest. However, the constraints of WCE, such as lack of controllability, and requiring expensive equipment for operation, which is often unavailable, pose significant challenges when it comes to conducting comprehensive experiments aimed at evaluating the quality of 3D reconstruction from WCE images. In this paper, we employ a single-image-based 3D reconstruction method on an artificial colon captured with an endoscope that behaves like WCE. The shape from shading (SFS) algorithm can reconstruct the 3D shape using a single image. Therefore, it has been employed to reconstruct the 3D shapes of the colon images. The camera of the endoscope has also been subjected to comprehensive geometric and radiometric calibration. Experiments are conducted on well-defined primitive objects to assess the method’s robustness and accuracy. This evaluation involves comparing the reconstructed 3D shapes of primitives with ground truth data, quantified through measurements of root-mean-square error and maximum error. Afterward, the same methodology is applied to recover the geometry of the colon. The results demonstrate that our approach is capable of reconstructing the geometry of the colon captured with a camera with an unknown imaging pipeline and significant noise in the images. The same procedure is applied on WCE images for the purpose of 3D reconstruction. Preliminary results are subsequently generated to illustrate the applicability of our method for reconstructing 3D models from WCE images.

(This article belongs to the Special Issue Geometry Reconstruction from Images (2nd Edition))

Open Access Review

Applied Artificial Intelligence in Healthcare: A Review of Computer Vision Technology Application in Hospital Settings

by

Heidi Lindroth, Keivan Nalaie, Roshini Raghu, Ivan N. Ayala, Charles Busch, Anirban Bhattacharyya, Pablo Moreno Franco, Daniel A. Diedrich, Brian W. Pickering and Vitaly Herasevich

J. Imaging 2024, 10(4), 81; https://doi.org/10.3390/jimaging10040081 - 28 Mar 2024

Abstract

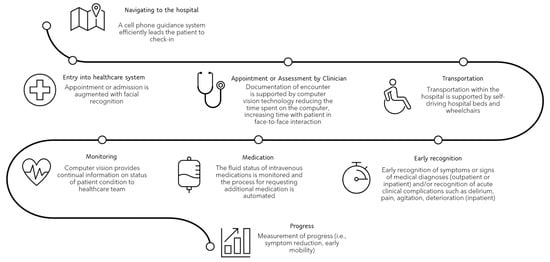

Computer vision (CV), a type of artificial intelligence (AI) that uses digital videos or a sequence of images to recognize content, has been used extensively across industries in recent years. However, in the healthcare industry, its applications are limited by factors like privacy, safety, and ethical concerns. Despite this, CV has the potential to improve patient monitoring and system efficiencies while reducing workload. In contrast to previous reviews, we focus on the end-user applications of CV. First, we briefly review and categorize CV applications in other industries (job enhancement, surveillance and monitoring, automation, and augmented reality). We then review the developments of CV in hospital, outpatient, and community settings. The recent advances in monitoring delirium, pain and sedation, patient deterioration, mechanical ventilation, mobility, patient safety, surgical applications, quantification of workload in the hospital, and monitoring for patient events outside the hospital are highlighted. To identify opportunities for future applications, we also completed journey mapping at different system levels. Lastly, we discuss the privacy, safety, and ethical considerations associated with CV and outline processes in algorithm development and testing that limit CV expansion in healthcare. This comprehensive review highlights CV applications and ideas for its expanded use in healthcare.

(This article belongs to the Special Issue Computer Vision and Deep Learning: Trends and Applications (2nd Edition))

Open Access Article

Magnetoencephalography Atlas Viewer for Dipole Localization and Viewing

by

N.C.d. Fonseca, Jason Bowerman, Pegah Askari, Amy L. Proskovec, Fabricio Stewan Feltrin, Daniel Veltkamp, Heather Early, Ben C. Wagner, Elizabeth M. Davenport and Joseph A. Maldjian

J. Imaging 2024, 10(4), 80; https://doi.org/10.3390/jimaging10040080 - 28 Mar 2024

Abstract

Magnetoencephalography (MEG) is a noninvasive neuroimaging technique widely recognized for epilepsy and tumor mapping. MEG clinical reporting requires a multidisciplinary team, including expert input regarding each dipole’s anatomic localization. Here, we introduce a novel tool, the “Magnetoencephalography Atlas Viewer” (MAV), which streamlines this anatomical analysis. The MAV normalizes the patient’s Magnetic Resonance Imaging (MRI) to the Montreal Neurological Institute (MNI) space, reverse-normalizes MNI atlases to the native MRI, identifies MEG dipole files, and matches dipoles’ coordinates to their spatial location in atlas files. It offers a user-friendly and interactive graphical user interface (GUI) for displaying individual dipoles, groups, coordinates, anatomical labels, and a tri-planar MRI view of the patient with dipole overlays. The MAV was evaluated on over 273 dipoles obtained from clinical epilepsy subjects. Consensus-based ground truth was established by three neuroradiologists, with a minimum agreement threshold of two. The concordance between the ground truth and MAV labeling ranged from 79% to 84%, depending on the normalization method. Higher concordance rates were observed in subjects with minimal or no structural abnormalities on the MRI, ranging from 80% to 90%. The MAV provides a straightforward MEG dipole anatomic localization method, allowing a nonspecialist to prepopulate a report, thereby facilitating and reducing the time of clinical reporting.
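The evaluation scheme described in this abstract (a consensus ground truth from three raters with a minimum agreement of two, then a concordance rate against the tool's labels) can be sketched in Python as follows. This is an illustrative assumption about how such a rate might be computed, not the authors' code; the function names and the anatomical labels are hypothetical, and dipoles without consensus are simply skipped here.

```python
from collections import Counter


def consensus_label(ratings, min_agreement=2):
    """Return the label chosen by at least `min_agreement` raters, else None."""
    label, count = Counter(ratings).most_common(1)[0]
    return label if count >= min_agreement else None


def concordance(tool_labels, rater_labels, min_agreement=2):
    """Fraction of dipoles with a consensus label that the tool labels identically."""
    with_consensus = matched = 0
    for tool, ratings in zip(tool_labels, rater_labels):
        truth = consensus_label(ratings, min_agreement)
        if truth is None:
            continue  # no consensus among raters: excluded in this sketch
        with_consensus += 1
        matched += int(tool == truth)
    return matched / with_consensus if with_consensus else float("nan")


# Hypothetical labels for four dipoles, for illustration only.
tool = ["temporal", "frontal", "parietal", "insula"]
raters = [
    ("temporal", "temporal", "frontal"),
    ("frontal", "frontal", "frontal"),
    ("occipital", "occipital", "parietal"),
    ("insula", "temporal", "frontal"),
]
rate = concordance(tool, raters)
print(rate)
```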

(This article belongs to the Section Neuroimaging and Neuroinformatics)

Open Access Article

Zero-Shot Sketch-Based Image Retrieval Using StyleGen and Stacked Siamese Neural Networks

by

Venkata Rama Muni Kumar Gopu and Madhavi Dunna

J. Imaging 2024, 10(4), 79; https://doi.org/10.3390/jimaging10040079 - 27 Mar 2024

Abstract

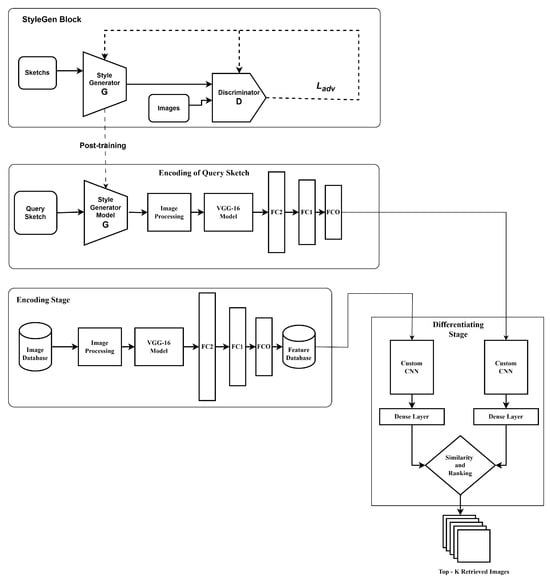

Sketch-based image retrieval (SBIR) refers to a sub-class of content-based image retrieval problems where the input queries are ambiguous sketches and the retrieval repository is a database of natural images. In the zero-shot setup of SBIR, the query sketches are drawn from classes that do not match any of those that were used in model building. Unlike general content-based image retrieval, SBIR is a cross-domain retrieval problem, which makes it extremely challenging because sketches and images have a huge domain gap. In this work, we propose an elegant retrieval methodology, StyleGen, for generating fake candidate images that match the domain of the repository images, thus reducing the domain gap for retrieval tasks. The retrieval methodology makes use of a two-stage neural network architecture known as the stacked Siamese network, which is known to provide outstanding retrieval performance without losing the generalizability of the approach. Experimental studies on the image sketch datasets TU-Berlin Extended and Sketchy Extended, evaluated using the mean average precision (mAP) metric, demonstrate a marked performance improvement compared to the current state-of-the-art approaches in the domain.

Open Access Article

Real-Time Dynamic Intelligent Image Recognition and Tracking System for Rockfall Disasters

by

Yu-Wei Lin, Chu-Fu Chiu, Li-Hsien Chen and Chao-Ching Ho

J. Imaging 2024, 10(4), 78; https://doi.org/10.3390/jimaging10040078 - 26 Mar 2024

Abstract

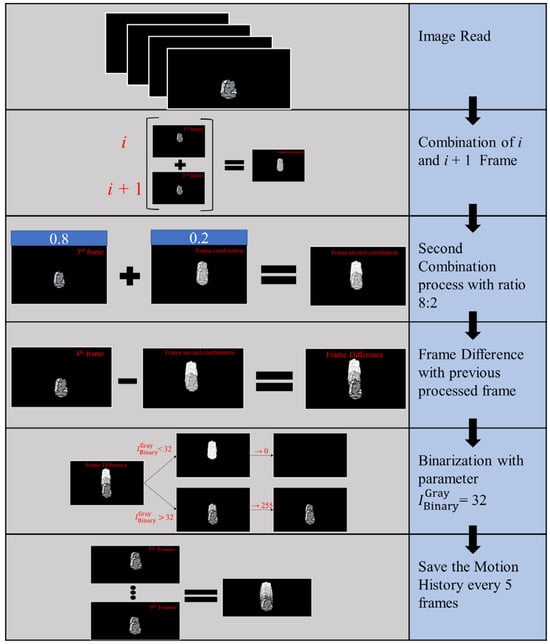

Taiwan, frequently affected by natural hazards such as earthquakes and typhoons, faces a high incidence of rockfall disasters due to its largely mountainous terrain. These disasters have led to numerous casualties, government compensation cases, and significant transportation safety impacts. According to the National Science and Technology Center for Disaster Reduction records from 2010 to 2022, 421 out of 866 soil and rock disasters occurred in eastern Taiwan, causing traffic disruptions due to rockfalls. Because traditional disaster-detection sensors record changes only after a rockfall, there is no system in place to detect rockfalls as they occur. To combat this, a rockfall detection and tracking system using deep learning and image processing technology was developed. This system includes a real-time image tracking and recognition system that integrates YOLO and image processing technology. It was trained on a self-collected dataset of 2490 high-resolution RGB images. The system’s performance was evaluated on 30 videos featuring various rockfall scenarios. It achieved a mean Average Precision (mAP50) of 0.845 and mAP50-95 of 0.41, with a processing time of 125 ms. Tested on advanced hardware, the system proves effective in quickly tracking and identifying hazardous rockfalls, offering a significant advancement in disaster management and prevention.

(This article belongs to the Special Issue From Imaging to Understanding: Methods and Application for Environment, Infrastructure and Human Monitoring)

Open Access Article

An Efficient CNN-Based Method for Intracranial Hemorrhage Segmentation from Computerized Tomography Imaging

by

Quoc Tuan Hoang, Xuan Hien Pham, Xuan Thang Trinh, Anh Vu Le, Minh V. Bui and Trung Thanh Bui

J. Imaging 2024, 10(4), 77; https://doi.org/10.3390/jimaging10040077 - 25 Mar 2024

Abstract

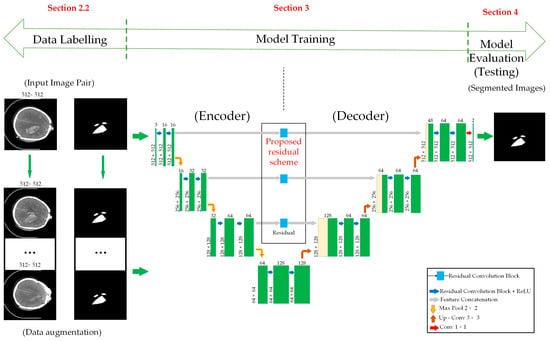

Intracranial hemorrhage (ICH) resulting from traumatic brain injury is a serious issue, often leading to death or long-term disability if not promptly diagnosed. Currently, doctors primarily use Computerized Tomography (CT) scans to detect and precisely locate a hemorrhage, typically interpreted by radiologists. However, this diagnostic process heavily relies on the expertise of medical professionals. To address potential errors, computer-aided diagnosis systems have been developed. In this study, we propose a new method that enhances the localization and segmentation of ICH lesions in CT scans by using multiple images created through different data augmentation techniques. We integrate residual connections into a U-Net-based segmentation network to improve the training efficiency. Our experiments, based on 82 CT scans from traumatic brain injury patients, validate the effectiveness of our approach, achieving an IOU score of 0.807 ± 0.03 for ICH segmentation using 10-fold cross-validation.
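The IOU (intersection over union) score reported in this abstract compares a predicted segmentation mask with a reference mask. A minimal Python sketch of the metric for binary masks is given below; the function name and the tiny example masks are hypothetical, and the convention of returning 1.0 when both masks are empty is an assumption of this sketch rather than something stated in the article.

```python
def iou(pred_mask, true_mask):
    """Intersection over union of two binary masks given as nested lists of 0/1."""
    intersection = union = 0
    for pred_row, true_row in zip(pred_mask, true_mask):
        for p, t in zip(pred_row, true_row):
            intersection += 1 if (p and t) else 0  # pixel marked in both masks
            union += 1 if (p or t) else 0          # pixel marked in either mask
    # Assumed convention: two empty masks count as a perfect match.
    return intersection / union if union else 1.0


# Hypothetical 2 x 3 masks, for illustration only.
pred = [[1, 1, 0],
        [0, 1, 0]]
true = [[1, 0, 0],
        [0, 1, 1]]
score = iou(pred, true)
print(score)
```

In a k-fold setting such as the 10-fold cross-validation mentioned above, a score like this would typically be computed per fold and then summarized as a mean and spread.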

(This article belongs to the Section Medical Imaging)

Open Access Article

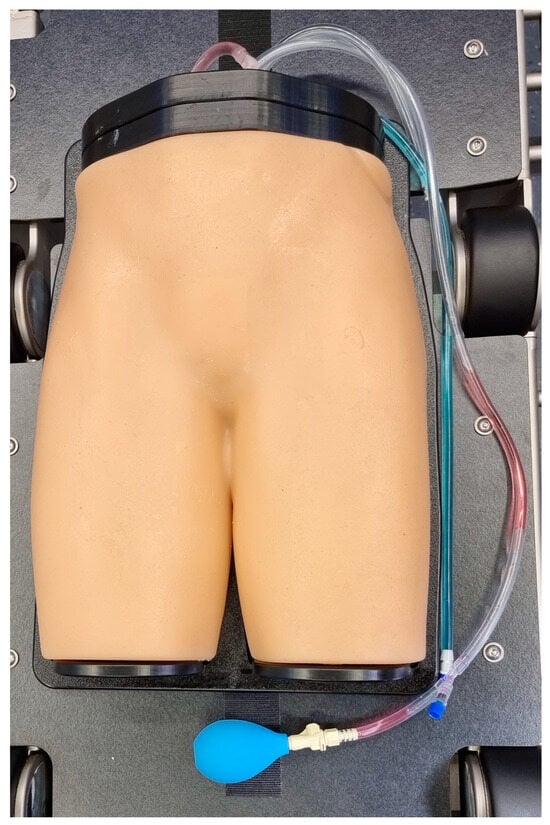

Comparing Different Registration and Visualization Methods for Navigated Common Femoral Arterial Access—A Phantom Model Study Using Mixed Reality

by

Johannes Hatzl, Daniel Henning, Dittmar Böckler, Niklas Hartmann, Katrin Meisenbacher and Christian Uhl

J. Imaging 2024, 10(4), 76; https://doi.org/10.3390/jimaging10040076 - 25 Mar 2024

Abstract

Mixed reality (MxR) enables the projection of virtual three-dimensional objects into the user’s field of view via a head-mounted display (HMD). This phantom model study investigated three different workflows for navigated common femoral arterial (CFA) access and compared them to a conventional sonography-guided technique as a control. A total of 160 punctures were performed by 10 operators (5 experts and 5 non-experts). A successful CFA puncture was defined as a puncture at the mid-level of the femoral head with the needle tip at the central lumen line at a 0° coronal insertion angle and a 45° sagittal insertion angle. Positional errors were quantified using cone-beam computed tomography following each attempt. Mixed effect modeling revealed that the distance from the needle entry site to the mid-level of the femoral head is significantly shorter for navigated techniques than for the control group. This highlights that three-dimensional visualization could increase the safety of CFA access. However, the navigated workflows are infrastructurally complex with limited usability and are associated with relevant cost. While navigated techniques appear as a potentially beneficial adjunct for safe CFA access, future developments should aim to reduce workflow complexity, avoid optical tracking systems, and offer more pragmatic methods of registration and instrument tracking.

(This article belongs to the Section Medical Imaging)

Open Access Review

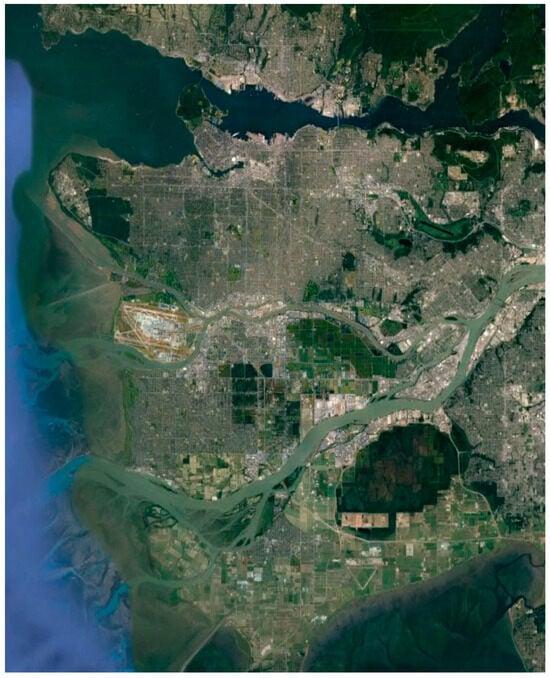

A Review on PolSAR Decompositions for Feature Extraction

by

Konstantinos Karachristos, Georgia Koukiou and Vassilis Anastassopoulos

J. Imaging 2024, 10(4), 75; https://doi.org/10.3390/jimaging10040075 - 24 Mar 2024

Abstract

Feature extraction plays a pivotal role in processing remote sensing datasets, especially in the realm of fully polarimetric data. This review investigates a variety of polarimetric decomposition techniques aimed at extracting comprehensive information from polarimetric imagery. These techniques are categorized as coherent and non-coherent methods, depending on their assumptions about the distribution of information among polarimetric cells. The review explores well-established and innovative approaches in polarimetric decomposition within both categories. It begins with a thorough examination of the foundational Pauli decomposition, a key algorithm in this field. Within the coherent category, the Cameron target decomposition is extensively explored, shedding light on its underlying principles. Transitioning to the non-coherent domain, the review investigates the Freeman–Durden decomposition and its extension, Yamaguchi’s approach. Additionally, the widely recognized eigenvector–eigenvalue decomposition introduced by Cloude and Pottier is scrutinized. Furthermore, each method undergoes experimental testing on the benchmark dataset of the broader Vancouver area, offering a robust analysis of their efficacy. The primary objective of this review is to systematically present well-established polarimetric decomposition algorithms, elucidating the underlying mathematical foundations of each. The aim is to facilitate a profound understanding of these approaches, coupled with insights into potential combinations for diverse applications.
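For readers unfamiliar with the Pauli decomposition mentioned in this abstract, a minimal Python sketch of its standard textbook form is shown below. It assumes a reciprocal (monostatic) scattering matrix, so that the two cross-polarized channels are equal; the function names and the sample scattering values are hypothetical and are not drawn from the review.

```python
import math


def pauli_vector(s_hh, s_hv, s_vv):
    """Pauli scattering vector (1/sqrt(2)) * [S_hh + S_vv, S_hh - S_vv, 2 * S_hv].

    Assumes reciprocity (S_hv == S_vh). The three components are commonly
    read as odd-bounce, even-bounce, and volume-type scattering.
    """
    scale = 1.0 / math.sqrt(2.0)
    return (scale * (s_hh + s_vv), scale * (s_hh - s_vv), scale * 2.0 * s_hv)


def span(s_hh, s_hv, s_vv):
    """Total backscattered power of the reciprocal scattering matrix."""
    return abs(s_hh) ** 2 + 2.0 * abs(s_hv) ** 2 + abs(s_vv) ** 2


# Hypothetical complex scattering coefficients for one pixel.
s_hh, s_hv, s_vv = 1 + 0j, 0.5j, -1 + 0j
k = pauli_vector(s_hh, s_hv, s_vv)
power = sum(abs(component) ** 2 for component in k)
print(k, power, span(s_hh, s_hv, s_vv))
```

The squared magnitudes of the three components sum to the span, which is why they are often mapped directly to the color channels of a Pauli RGB composite.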

(This article belongs to the Section Visualization and Computer Graphics)

Topics

Topic in

Algorithms, Diagnostics, Entropy, Information, J. Imaging

Application of Machine Learning in Molecular Imaging

Topic Editors: Allegra Conti, Nicola Toschi, Marianna Inglese, Andrea Duggento, Matthew Grech-Sollars, Serena Monti, Giancarlo Sportelli, Pietro Carra

Deadline: 31 May 2024

Topic in

Applied Sciences, Computation, Entropy, J. Imaging

Color Image Processing: Models and Methods (CIP: MM)

Topic Editors: Giuliana Ramella, Isabella Torcicollo

Deadline: 30 July 2024

Topic in

Applied Sciences, Sensors, J. Imaging, MAKE

Applications in Image Analysis and Pattern Recognition

Topic Editors: Bin Fan, Wenqi Ren

Deadline: 31 August 2024

Topic in

Applied Sciences, Electronics, J. Imaging, MAKE, Remote Sensing

Computational Intelligence in Remote Sensing: 2nd Edition

Topic Editors: Yue Wu, Kai Qin, Maoguo Gong, Qiguang Miao

Deadline: 31 December 2024

Special Issues

Special Issue in

J. Imaging

Advances and Challenges in Multimodal Machine Learning 2nd Edition

Guest Editor: Georgina Cosma

Deadline: 30 April 2024

Special Issue in

J. Imaging

Modelling of Human Visual System in Image Processing

Guest Editors: Alexey Mashtakov, Edoardo Provenzi

Deadline: 24 May 2024

Special Issue in

J. Imaging

The Mixed Reality Revolution: Challenges and Prospects 2nd Edition

Guest Editors: Sébastien Mavromatis, Jean Sequeira

Deadline: 31 May 2024

Special Issue in

J. Imaging

Advances and Challenges in Multimodal Machine Learning

Guest Editor: Georgina Cosma

Deadline: 30 June 2024