Evaluating the Performance of Automated Machine Learning (AutoML) Tools for Heart Disease Diagnosis and Prediction

Chat GPT in Diagnostic Human Pathology: Will It Be Useful to Pathologists? A Preliminary Review with ‘Query Session’ and Future Perspectives

A Comprehensive Review of AI Techniques for Addressing Algorithmic Bias in Job Hiring

Journal Description

AI is an international, peer-reviewed, open access journal on artificial intelligence (AI), including broad aspects of cognition and reasoning, perception and planning, machine learning, intelligent robotics, and applications of AI, published quarterly online by MDPI.

- Open Access: free for readers, with article processing charges (APC) paid by authors or their institutions.

- High Visibility: indexed within ESCI (Web of Science), Scopus, EBSCO, and other databases.

- Rapid Publication: manuscripts are peer-reviewed and a first decision is provided to authors approximately 20.8 days after submission; acceptance to publication is undertaken in 5.8 days (median values for papers published in this journal in the second half of 2023).

- Recognition of Reviewers: APC discount vouchers, optional signed peer review, and reviewer names published annually in the journal.

Latest Articles

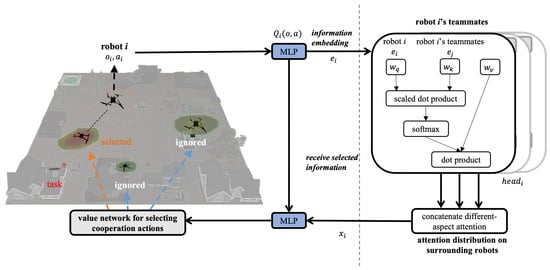

Development of an Attention Mechanism for Task-Adaptive Heterogeneous Robot Teaming

AI 2024, 5(2), 555-575; https://doi.org/10.3390/ai5020029 - 23 Apr 2024

Abstract

The allure of team scale and functional diversity has led to the promising adoption of heterogeneous multi-robot systems (HMRS) in complex, large-scale operations such as disaster search and rescue, site surveillance, and social security. These systems, which coordinate multiple robots of varying functions and quantities, face the significant challenge of accurately assembling robot teams that meet the dynamic needs of tasks with respect to size and functionality, all while maintaining minimal resource expenditure. This paper introduces a pioneering adaptive cooperation method named inner attention (innerATT), crafted to dynamically configure teams of heterogeneous robots in response to evolving task types and environmental conditions. The innerATT method is articulated through the integration of an innovative attention mechanism within a multi-agent actor–critic reinforcement learning framework, enabling the strategic analysis of robot capabilities to efficiently form teams that fulfill specific task demands. To demonstrate the efficacy of innerATT in facilitating cooperation, experimental scenarios encompassing variations in task type (“Single Task”, “Double Task”, and “Mixed Task”) and robot availability are constructed under the themes of “task variety” and “robot availability variety.” The findings affirm that innerATT significantly enhances flexible cooperation, diminishes resource usage, and bolsters robustness in task fulfillment.

Open Access Editorial

Artificial Intelligence in Healthcare: ChatGPT and Beyond

by

Tim Hulsen

AI 2024, 5(2), 550-554; https://doi.org/10.3390/ai5020028 - 19 Apr 2024

Abstract

Artificial intelligence (AI), the simulation of human intelligence processes by machines, is having a growing impact on healthcare [...]

(This article belongs to the Special Issue Artificial Intelligence in Healthcare: Current State and Future Perspectives)

Open Access Article

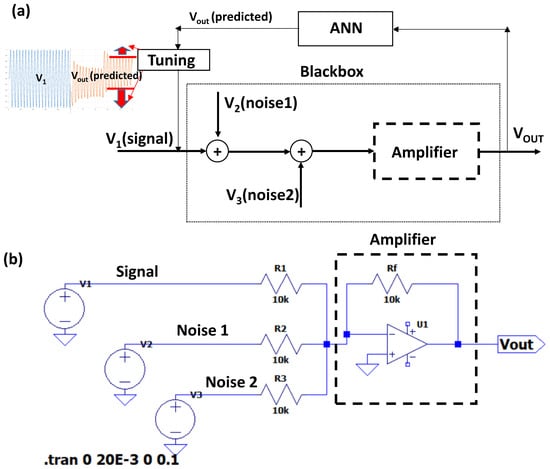

ANNs Predicting Noisy Signals in Electronic Circuits: A Model Predicting the Signal Trend in Amplification Systems

by

Alessandro Massaro

AI 2024, 5(2), 533-549; https://doi.org/10.3390/ai5020027 - 17 Apr 2024

Abstract

In the proposed paper, an artificial neural network (ANN) algorithm is applied to predict the electronic circuit outputs of voltage signals in Industry 4.0/5.0 scenarios. This approach is suitable to predict possible uncorrected behavior of control circuits affected by unknown noise, and to reproduce a testbed method simulating the noise effect influencing the amplification of an input sinusoidal voltage signal, which is a basic and fundamental signal for controlled manufacturing systems. The performed simulations take into account different noise signals changing their time-domain trend and frequency behavior to prove the possibility of predicting voltage outputs when complex signals are considered at the control circuit input, including additive disturbances and noise. The results highlight that it is possible to construct a good ANN training model by processing only the registered voltage output signals without considering the noise profile (which is typically unknown). The proposed model behaves as an electronic black box for Industry 5.0 manufacturing processes automating circuit and machine tuning procedures. By analyzing state-of-the-art ANNs, the study offers an innovative ANN-based versatile solution that is able to process various noise profiles without requiring prior knowledge of the noise characteristics.

Open Access Review

Fetal Hypoxia Detection Using Machine Learning: A Narrative Review

by

Nawaf Alharbi, Mustafa Youldash, Duha Alotaibi, Haya Aldossary, Reema Albrahim, Reham Alzahrani, Wahbia Ahmed Saleh, Sunday O. Olatunji and May Issa Aldossary

AI 2024, 5(2), 516-532; https://doi.org/10.3390/ai5020026 - 13 Apr 2024

Abstract

Fetal hypoxia is a condition characterized by a lack of oxygen supply in a developing fetus in the womb. It can cause potential risks, leading to abnormalities, birth defects, and even mortality. Cardiotocograph (CTG) monitoring is among the techniques that can detect any signs of fetal distress, including hypoxia. Due to the critical importance of interpreting the results of this test, it is essential to accompany these tests with the evolving available technology to classify cases of hypoxia into three classes: normal, suspicious, or pathological. Furthermore, Machine Learning (ML) is a blossoming technique constantly developing and aiding in medical studies, particularly fetal health prediction. Notwithstanding the past endeavors of health providers to detect hypoxia in fetuses, implementing ML and Deep Learning (DL) techniques ensures more timely and precise detection of fetal hypoxia by efficiently and accurately processing complex patterns in large datasets. Correspondingly, this review paper aims to explore the application of artificial intelligence models using cardiotocographic test data. The anticipated outcome of this review is to introduce guidance for future studies to enhance accuracy in detecting cases categorized within the suspicious class, an aspect that has proven challenging in previous studies and that holds significant implications for obstetricians in effectively monitoring fetal health and making informed decisions.

(This article belongs to the Section Medical & Healthcare AI)

Open Access Article

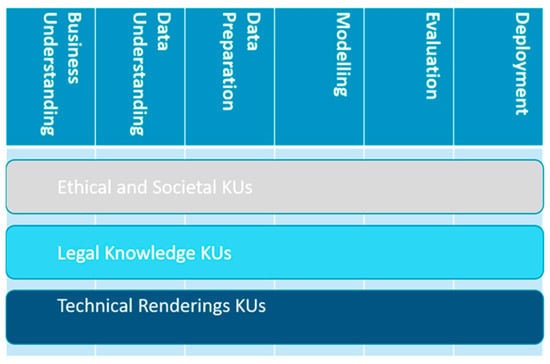

Towards an ELSA Curriculum for Data Scientists

by

Maria Christoforaki and Oya Deniz Beyan

AI 2024, 5(2), 504-515; https://doi.org/10.3390/ai5020025 - 11 Apr 2024

Abstract

The use of artificial intelligence (AI) applications in a growing number of domains in recent years has put into focus the ethical, legal, and societal aspects (ELSA) of these technologies and the relevant challenges they pose. In this paper, we propose an ELSA curriculum for data scientists aiming to raise awareness about ELSA challenges in their work, provide them with a common language with the relevant domain experts in order to cooperate to find appropriate solutions, and finally, incorporate ELSA in the data science workflow. ELSA should not be seen as an impediment or a superfluous artefact but rather as an integral part of the Data Science Project Lifecycle. The proposed curriculum uses the CRISP-DM (CRoss-Industry Standard Process for Data Mining) model as a backbone to define a vertical partition expressed in modules corresponding to the CRISP-DM phases. The horizontal partition includes knowledge units belonging to three strands that run through the phases, namely ethical and societal, legal and technical rendering knowledge units (KUs). In addition to the detailed description of the aforementioned KUs, we also discuss their implementation, issues such as duration, form, and evaluation of participants, as well as the variance of the knowledge level and needs of the target audience.

(This article belongs to the Special Issue Standards and Ethics in AI)

Open Access Article

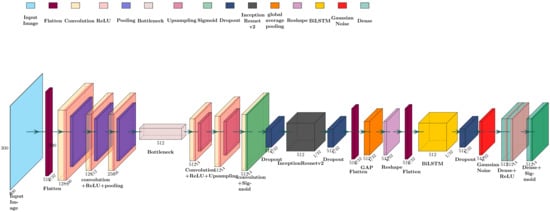

ECARRNet: An Efficient LSTM-Based Ensembled Deep Neural Network Architecture for Railway Fault Detection

by

Salman Ibne Eunus, Shahriar Hossain, A. E. M. Ridwan, Ashik Adnan, Md. Saiful Islam, Dewan Ziaul Karim, Golam Rabiul Alam and Jia Uddin

AI 2024, 5(2), 482-503; https://doi.org/10.3390/ai5020024 - 08 Apr 2024

Abstract

Accidents due to defective railway lines and derailments are common disasters that are observed frequently in Southeast Asian countries. It is imperative to run proper diagnosis over the detection of such faults to prevent such accidents. However, manual detection of such faults periodically can be both time-consuming and costly. In this paper, we have proposed a Deep Learning (DL)-based algorithm for automatic fault detection in railway tracks, which we termed an Ensembled Convolutional Autoencoder ResNet-based Recurrent Neural Network (ECARRNet). We compared its output with existing DL techniques in the form of several pre-trained DL models to investigate railway tracks and determine whether they are defective or not while considering commonly prevalent faults such as defects in rails and fasteners. Moreover, we manually collected the images from different railway tracks situated in Bangladesh and built our own dataset. After comparing our proposed model with the existing models, we found that our proposed architecture has produced the highest accuracy among all the previously existing state-of-the-art (SOTA) architectures, with an accuracy of 93.28% on the full dataset. Additionally, we split our dataset into two parts having two different types of faults, which are fasteners and rails. We ran the models on those two separate datasets, obtaining accuracies of 98.59% and 92.06% on rail and fastener, respectively. Model explainability techniques like Grad-CAM and LIME were used to validate the result of the models, where our proposed model ECARRNet was seen to correctly classify and detect the regions of faulty railways effectively compared to the previously existing transfer learning models.

Open Access Article

Visual Analytics in Explaining Neural Networks with Neuron Clustering

by

Gulsum Alicioglu and Bo Sun

AI 2024, 5(2), 465-481; https://doi.org/10.3390/ai5020023 - 05 Apr 2024

Abstract

Deep learning (DL) models have achieved state-of-the-art performance in many domains. The interpretation of their working mechanisms and decision-making process is essential because of their complex structure and black-box nature, especially for sensitive domains such as healthcare. Visual analytics (VA) combined with DL methods have been widely used to discover data insights, but they often encounter visual clutter (VC) issues. This study presents a compact neural network (NN) view design to reduce the visual clutter in explaining the DL model components for domain experts and end users. We utilized clustering algorithms to group hidden neurons based on their activation similarities. This design supports the overall and detailed view of the neuron clusters. We used a tabular healthcare dataset as a case study. The design for clustered results reduced visual clutter among neuron representations by 54% and connections by 88.7% and helped to observe similar neuron activations learned during the training process.

(This article belongs to the Special Issue Machine Learning for HCI: Cases, Trends and Challenges)

Open Access Article

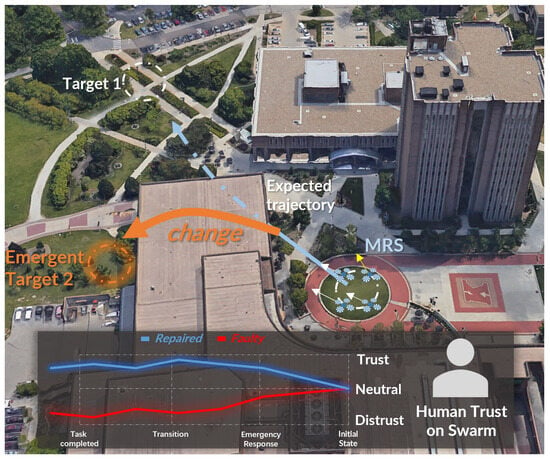

Trust-Aware Reflective Control for Fault-Resilient Dynamic Task Response in Human–Swarm Cooperation

by

Yibei Guo, Yijiang Pang, Joseph Lyons, Michael Lewis, Katia Sycara and Rui Liu

AI 2024, 5(1), 446-464; https://doi.org/10.3390/ai5010022 - 21 Mar 2024

Abstract

Due to the complexity of real-world deployments, a robot swarm is required to dynamically respond to tasks such as tracking multiple vehicles and continuously searching for victims. Frequent task assignments eliminate the need for system calibration time, but they also introduce uncertainty from previous tasks, which can undermine swarm performance. Therefore, responding to dynamic tasks presents a significant challenge for a robot swarm compared to handling tasks one at a time. In human–human cooperation, trust plays a crucial role in understanding each other’s performance expectations and adjusting one’s behavior for better cooperation. Taking inspiration from human trust, this paper introduces a trust-aware reflective control method called “Trust-R”. Trust-R, based on a weighted mean subsequence reduced algorithm (WMSR) and human trust modeling, enables a swarm to self-reflect on its performance from a human perspective. It proactively corrects faulty behaviors at an early stage before human intervention, mitigating the negative influence of uncertainty accumulated from dynamic tasks. Three typical task scenarios {Scenario 1: flocking to the assigned destination; Scenario 2: a transition between destinations; and Scenario 3: emergent response} were designed in the real-gravity simulation environment, and a human user study with 145 volunteers was conducted. Trust-R significantly improves both swarm performance and trust in dynamic task scenarios, marking a pivotal step forward in integrating trust dynamics into swarm robotics.

Open Access Article

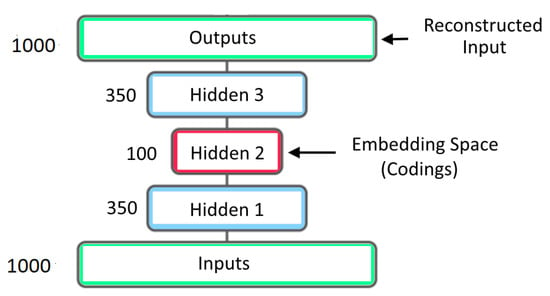

Single Image Super Resolution Using Deep Residual Learning

by

Moiz Hassan, Kandasamy Illanko and Xavier N. Fernando

AI 2024, 5(1), 426-445; https://doi.org/10.3390/ai5010021 - 21 Mar 2024

Abstract

Single Image Super Resolution (SISR) is an intriguing research topic in computer vision where the goal is to create high-resolution images from low-resolution ones using innovative techniques. SISR has numerous applications in fields such as medical/satellite imaging, remote target identification and autonomous vehicles. Compared to traditional interpolation-based approaches, deep learning techniques have recently gained attention in SISR due to their superior performance and computational efficiency. This article proposes an autoencoder-based deep learning model for SISR. The down-sampling part of the autoencoder mainly uses 3 by 3 convolution and has no subsampling layers. The up-sampling part uses transpose convolution and residual connections from the down-sampling part. The model is trained using a subset of the ILSVRC ImageNet database as well as the RealSR database. Quantitative metrics such as PSNR and SSIM are found to be as high as 76.06 and 0.93 in our testing. We also used qualitative measures such as perceptual quality.

(This article belongs to the Special Issue Artificial Intelligence-Based Image Processing and Computer Vision)

Open Access Review

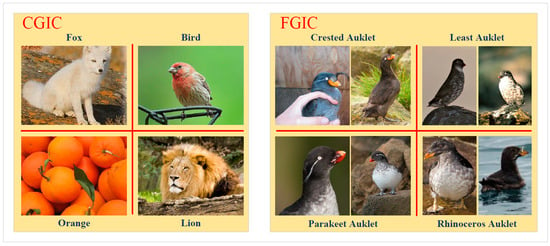

Few-Shot Fine-Grained Image Classification: A Comprehensive Review

by

Jie Ren, Changmiao Li, Yaohui An, Weichuan Zhang and Changming Sun

AI 2024, 5(1), 405-425; https://doi.org/10.3390/ai5010020 - 06 Mar 2024

Abstract

Few-shot fine-grained image classification (FSFGIC) methods refer to the classification of images (e.g., birds, flowers, and airplanes) belonging to different subclasses of the same species by a small number of labeled samples. Through feature representation learning, FSFGIC methods can make better use of limited sample information, learn more discriminative feature representations, greatly improve the classification accuracy and generalization ability, and thus achieve better results in FSFGIC tasks. In this paper, starting from the definition of FSFGIC, a taxonomy of feature representation learning for FSFGIC is proposed. According to this taxonomy, we discuss key issues on FSFGIC (including data augmentation, local and/or global deep feature representation learning, class representation learning, and task-specific feature representation learning). In addition, the existing popular datasets, current challenges and future development trends of feature representation learning on FSFGIC are also described.

(This article belongs to the Special Issue Artificial Intelligence-Based Image Processing and Computer Vision)

Open Access Review

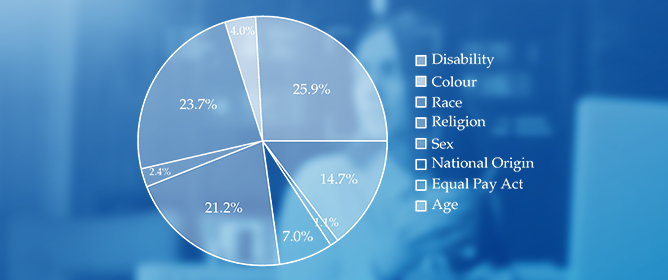

A Comprehensive Review of AI Techniques for Addressing Algorithmic Bias in Job Hiring

by

Elham Albaroudi, Taha Mansouri and Ali Alameer

AI 2024, 5(1), 383-404; https://doi.org/10.3390/ai5010019 - 07 Feb 2024

Abstract

The study comprehensively reviews artificial intelligence (AI) techniques for addressing algorithmic bias in job hiring. More businesses are using AI in curriculum vitae (CV) screening. While the move improves efficiency in the recruitment process, it is vulnerable to biases, which have adverse effects on organizations and the broader society. This research aims to analyze case studies on AI hiring to demonstrate both successful implementations and instances of bias. It also seeks to evaluate the impact of algorithmic bias and the strategies to mitigate it. The basic design of the study entails undertaking a systematic review of existing literature and research studies that focus on artificial intelligence techniques employed to mitigate bias in hiring. The results demonstrate that the correction of the vector space and data augmentation are effective natural language processing (NLP) and deep learning techniques for mitigating algorithmic bias in hiring. The findings underscore the potential of artificial intelligence techniques to promote fairness and diversity in the hiring process. The study contributes to human resource practice by enhancing hiring algorithms’ fairness. It recommends collaboration between machines and humans to enhance the fairness of the hiring process. The results can help AI developers make algorithmic changes needed to enhance fairness in AI-driven tools. This will enable the development of ethical hiring tools, contributing to fairness in society.

(This article belongs to the Section AI Systems: Theory and Applications)

Open Access Article

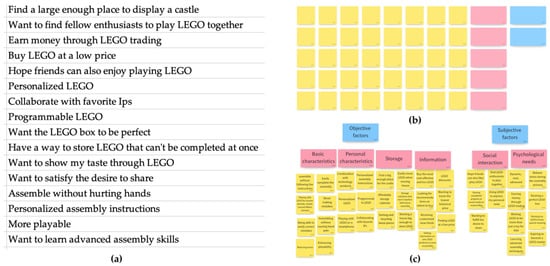

Automated Classification of User Needs for Beginner User Experience Designers: A Kano Model and Text Analysis Approach Using Deep Learning

by

Zhejun Zhang, Huiying Chen, Ruonan Huang, Lihong Zhu, Shengling Ma, Larry Leifer and Wei Liu

AI 2024, 5(1), 364-382; https://doi.org/10.3390/ai5010018 - 02 Feb 2024

Abstract

This study introduces a novel tool for classifying user needs in user experience (UX) design, specifically tailored for beginners, with potential applications in education. The tool employs the Kano model, text analysis, and deep learning to classify user needs efficiently into four categories. The data for the study were collected through interviews and web crawling, yielding 19 user needs from Generation Z users (born between 1995 and 2009) of LEGO toys (Billund, Denmark). These needs were then categorized into must-be, one-dimensional, attractive, and indifferent needs through a Kano-based questionnaire survey. A dataset of over 3000 online comments was created through preprocessing and annotating, which was used to train and evaluate seven deep learning models. The most effective model, the Recurrent Convolutional Neural Network (RCNN), was employed to develop a graphical text classification tool that accurately outputs the corresponding category and probability of user input text according to the Kano model. A usability test compared the tool’s performance to the traditional affinity diagram method. The tool outperformed the affinity diagram method in six dimensions and outperformed three qualities of the User Experience Questionnaire (UEQ), indicating a superior UX. The tool also demonstrated a lower perceived workload, as measured using the NASA Task Load Index (NASA-TLX), and received a positive Net Promoter Score (NPS) of 23 from the participants. These findings underscore the potential of this tool as a valuable educational resource in UX design courses. It offers students a more efficient and engaging and less burdensome learning experience while seamlessly integrating artificial intelligence into UX design education. This study provides UX design beginners with a practical and intuitive tool, facilitating a deeper understanding of user needs and innovative design strategies.

(This article belongs to the Topic Machine Learning in Internet of Things)

Open Access Article

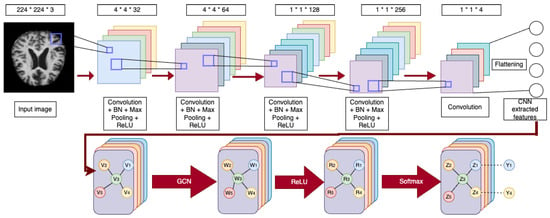

New Convolutional Neural Network and Graph Convolutional Network-Based Architecture for AI Applications in Alzheimer’s Disease and Dementia-Stage Classification

by

Md Easin Hasan and Amy Wagler

AI 2024, 5(1), 342-363; https://doi.org/10.3390/ai5010017 - 01 Feb 2024

Abstract

Neuroimaging experts in biotech industries can benefit from using cutting-edge artificial intelligence techniques for Alzheimer’s disease (AD)- and dementia-stage prediction, even though it is difficult to anticipate the precise stage of dementia and AD. Therefore, we propose a cutting-edge, computer-assisted method based on an advanced deep learning algorithm to differentiate between people with varying degrees of dementia, including healthy, very mild dementia, mild dementia, and moderate dementia classes. In this paper, four separate models were developed for classifying different dementia stages: convolutional neural networks (CNNs) built from scratch, pre-trained VGG16 with additional convolutional layers, graph convolutional networks (GCNs), and CNN-GCN models. The CNNs were implemented, and then the flattened layer output was fed to the GCN classifier, resulting in the proposed CNN-GCN architecture. A total of 6400 whole-brain magnetic resonance imaging scans were obtained from the Alzheimer’s Disease Neuroimaging Initiative database to train and evaluate the proposed methods. We applied the 5-fold cross-validation (CV) technique for all the models. We presented the results from the best fold out of the five folds in assessing the performance of the models developed in this study. Hence, for the best fold of the 5-fold CV, the above-mentioned models achieved an overall accuracy of 43.83%, 71.17%, 99.06%, and 100%, respectively. The CNN-GCN model, in particular, demonstrates excellent performance in classifying different stages of dementia. Understanding the stages of dementia can assist biotech industry researchers in uncovering molecular markers and pathways connected with each stage.

(This article belongs to the Special Issue Artificial Intelligence in Healthcare: Current State and Future Perspectives)

Open Access Article

Convolutional Neural Networks in the Diagnosis of Colon Adenocarcinoma

by

Marco Leo, Pierluigi Carcagnì, Luca Signore, Francesco Corcione, Giulio Benincasa, Mikko O. Laukkanen and Cosimo Distante

AI 2024, 5(1), 324-341; https://doi.org/10.3390/ai5010016 - 29 Jan 2024

Abstract

Colorectal cancer is one of the most lethal cancers because of late diagnosis and challenges in the selection of therapy options. The histopathological diagnosis of colon adenocarcinoma is hindered by poor reproducibility and a lack of standard examination protocols required for appropriate treatment decisions. In the current study, using state-of-the-art approaches on benchmark datasets, we analyzed different architectures and ensembling strategies to develop the most efficient network combinations to improve binary and ternary classification. We propose an innovative two-stage pipeline approach to diagnose colon adenocarcinoma grading from histological images in a similar manner to a pathologist. The glandular regions were first segmented by a transformer architecture with subsequent classification using a convolutional neural network (CNN) ensemble, which markedly improved the learning efficiency and shortened the learning time. Moreover, we prepared and published a dataset for clinical validation of the developed artificial neural network, which suggested the discovery of novel histological phenotypic alterations in adenocarcinoma sections that could have prognostic value. Therefore, AI could markedly improve the reproducibility, efficiency, and accuracy of colon cancer diagnosis, which are required for precision medicine to personalize the treatment of cancer patients.

(This article belongs to the Special Issue Artificial Intelligence in Healthcare: Current State and Future Perspectives)

Open Access Review

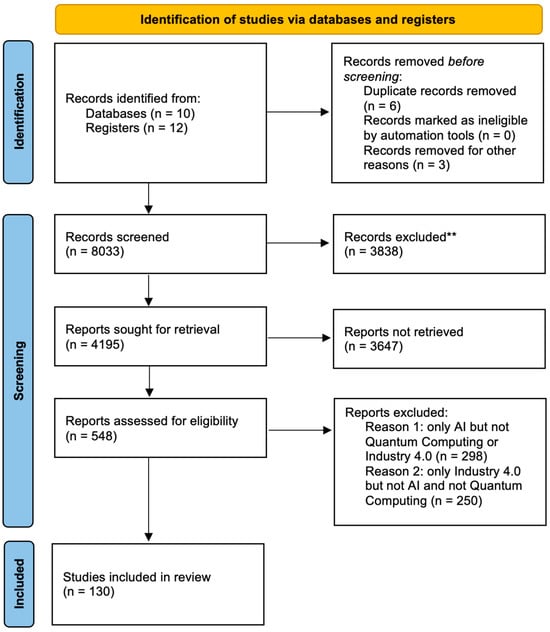

Forging the Future: Strategic Approaches to Quantum AI Integration for Industry Transformation

by

Meng-Leong How and Sin-Mei Cheah

AI 2024, 5(1), 290-323; https://doi.org/10.3390/ai5010015 - 29 Jan 2024

Abstract

The fusion of quantum computing and artificial intelligence (AI) heralds a transformative era for Industry 4.0, offering unprecedented capabilities and challenges. This paper delves into the intricacies of quantum AI, its potential impact on Industry 4.0, and the necessary change management and innovation strategies for seamless integration. Drawing from theoretical insights and real-world case studies, we explore the current landscape of quantum AI, its foreseeable influence, and the implications for organizational strategy. We further expound on traditional change management tactics, emphasizing the importance of continuous learning, ecosystem collaborations, and proactive approaches. By examining successful and failed quantum AI implementations, lessons are derived to guide future endeavors. Conclusively, the paper underscores the imperative of being proactive in embracing quantum AI innovations, advocating for strategic foresight, interdisciplinary collaboration, and robust risk management. Through a comprehensive exploration, this paper aims to equip stakeholders with the knowledge and strategies to navigate the complexities of quantum AI in Industry 4.0, emphasizing its transformative potential and the necessity for preparedness and adaptability.

Open Access Article

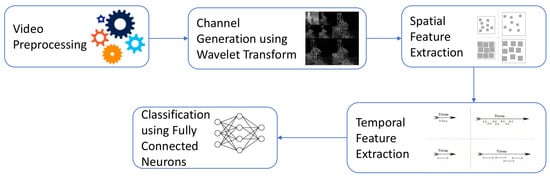

MultiWave-Net: An Optimized Spatiotemporal Network for Abnormal Action Recognition Using Wavelet-Based Channel Augmentation

by

Ramez M. Elmasry, Mohamed A. Abd El Ghany, Mohammed A.-M. Salem and Omar M. Fahmy

AI 2024, 5(1), 259-289; https://doi.org/10.3390/ai5010014 - 24 Jan 2024

Abstract

Human behavior is regarded as one of the most complex notions, owing to the vast range of possibilities. Behaviors and actions can be classed as normal or abnormal. However, abnormal behavior covers a wide spectrum, so in this work it is taken to mean human aggression or, in a traffic context, car accidents on the road. As this behavior can negatively affect surrounding traffic participants, such as vehicles and pedestrians, it is crucial to monitor it. Given the current prevalence of cameras of different types, they can be used to classify and monitor such behavior. Accordingly, this work proposes a new optimized model based on a novel integrated wavelet-based channel augmentation unit for classifying human behavior in various scenes, with 5.3 million trainable parameters and an average inference time of 0.09 s. The model has been trained and evaluated on four public datasets: Real Live Violence Situations (RLVS), Highway Incident Detection (HWID), Movie Fights, and Hockey Fights. The proposed technique achieved accuracies in the range of

(This article belongs to the Special Issue Artificial Intelligence-Based Image Processing and Computer Vision)

Open Access Article

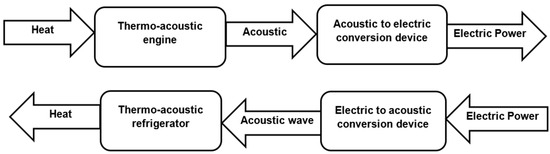

Enhancing Thermo-Acoustic Waste Heat Recovery through Machine Learning: A Comparative Analysis of Artificial Neural Network–Particle Swarm Optimization, Adaptive Neuro Fuzzy Inference System, and Artificial Neural Network Models

by

Miniyenkosi Ngcukayitobi, Lagouge Kwanda Tartibu and Flávio Bannwart

AI 2024, 5(1), 237-258; https://doi.org/10.3390/ai5010013 - 19 Jan 2024

Abstract

Waste heat recovery stands out as a promising technique for tackling both energy shortages and environmental pollution. Currently, this valuable resource, generated through processes like fuel combustion or chemical reactions, is often dissipated into the environment, despite its potential to significantly contribute to the economy. To harness this untapped potential, a traveling-wave thermo-acoustic generator has been designed and subjected to comprehensive experimental analysis. Fifty-two data points corresponding to different working conditions of the system were extracted to build ANN, ANFIS, and ANN-PSO models. Evaluation of performance metrics reveals that the ANN-PSO model demonstrates the highest predictive accuracy (

Open Access Article

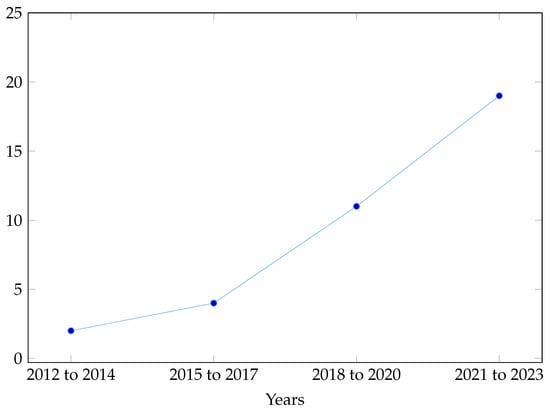

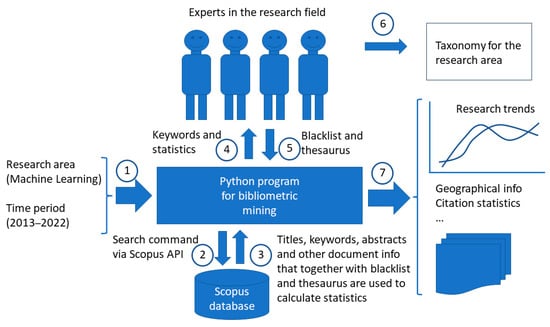

Bibliometric Mining of Research Trends in Machine Learning

by

Lars Lundberg, Martin Boldt, Anton Borg and Håkan Grahn

AI 2024, 5(1), 208-236; https://doi.org/10.3390/ai5010012 - 19 Jan 2024

Cited by 1

Abstract

We present a method, including tool support, for bibliometric mining of trends in large and dynamic research areas. The method is applied to the machine learning research area for the years 2013 to 2022. A total number of 398,782 documents from Scopus were analyzed. A taxonomy containing 26 research directions within machine learning was defined by four experts with the help of a Python program and existing taxonomies. The trends in terms of productivity, growth rate, and citations were analyzed for the research directions in the taxonomy. Our results show that the two directions, Applications and Algorithms, are the largest, and that the direction Convolutional Neural Networks is the one that grows the fastest and has the highest average number of citations per document. It also turns out that there is a clear correlation between the growth rate and the average number of citations per document, i.e., documents in fast-growing research directions have more citations. The trends for machine learning research in four geographic regions (North America, Europe, the BRICS countries, and The Rest of the World) were also analyzed. The number of documents during the time period considered is approximately the same for all regions. BRICS has the highest growth rate, and, on average, North America has the highest number of citations per document. Using our tool and method, we expect that one could perform a similar study in some other large and dynamic research area in a relatively short time.

Open Access Article

Audio-Based Emotion Recognition Using Self-Supervised Learning on an Engineered Feature Space

by

Peranut Nimitsurachat and Peter Washington

AI 2024, 5(1), 195-207; https://doi.org/10.3390/ai5010011 - 17 Jan 2024

Abstract

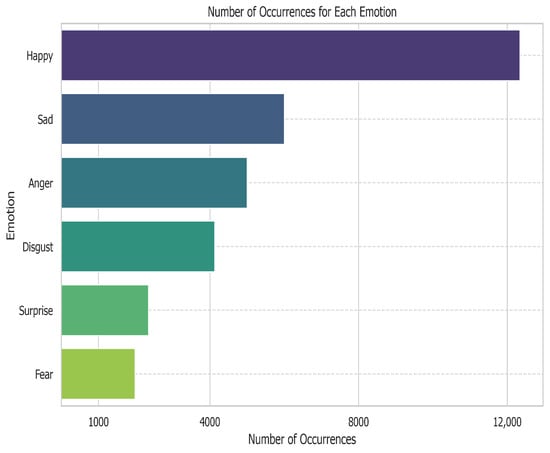

Emotion recognition models using audio input data can enable the development of interactive systems with applications in mental healthcare, marketing, gaming, and social media analysis. While the field of affective computing using audio data is rich, a major barrier to achieving consistently high-performance models is the paucity of available training labels. Self-supervised learning (SSL) is a family of methods which can learn despite a scarcity of supervised labels by predicting properties of the data itself. To understand the utility of self-supervised learning for audio-based emotion recognition, we have applied self-supervised learning pre-training to the classification of emotions from the acoustic data of the CMU Multimodal Opinion Sentiment and Emotion Intensity (CMU-MOSEI) dataset. Unlike prior papers that have experimented with raw acoustic data, our technique has been applied to encoded acoustic data with 74 parameters of distinctive audio features at discrete timesteps. Our model is first pre-trained to uncover the randomly masked timestamps of the acoustic data. The pre-trained model is then fine-tuned using a small sample of annotated data. The performance of the final model is then evaluated via overall mean absolute error (MAE), MAE per emotion, overall four-class accuracy, and four-class accuracy per emotion. These metrics are compared against a baseline deep learning model with an identical backbone architecture. We find that self-supervised learning consistently improves the performance of the model across all metrics, especially when the number of annotated data points in the fine-tuning step is small. Furthermore, we quantify the behaviors of the self-supervised model and its convergence as the amount of annotated data increases.
This work characterizes the utility of self-supervised learning for affective computing, demonstrating that self-supervised learning is most useful when the number of training examples is small and that the effect is most pronounced for emotions which are easier to classify such as happy, sad, and angry. This work further demonstrates that self-supervised learning still improves performance when applied to the embedded feature representations rather than the traditional approach of pre-training on the raw input space.
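
The masked pre-training objective described in this abstract can be illustrated with a minimal sketch. It is not the authors' implementation: the function names `mask_timesteps` and `reconstruction_mae`, the 15% mask fraction, and the choice to zero out masked frames are all assumptions made for illustration. Only the 74-feature frame width and the idea of recovering randomly masked timesteps come from the abstract.

```python
import random


def mask_timesteps(sequence, mask_fraction=0.15, seed=None):
    """Zero out a random subset of timesteps (hypothetical helper).

    `sequence` is a list of frames, each frame a list of floats.
    Returns a masked copy and the sorted indices that were masked.
    """
    rng = random.Random(seed)
    n_steps = len(sequence)
    # Always mask at least one timestep so there is something to predict.
    n_masked = max(1, round(n_steps * mask_fraction))
    masked_idx = sorted(rng.sample(range(n_steps), n_masked))
    masked = [list(frame) for frame in sequence]  # copy; input is untouched
    for t in masked_idx:
        masked[t] = [0.0] * len(sequence[t])
    return masked, masked_idx


def reconstruction_mae(prediction, target, masked_idx):
    """Mean absolute error computed only over the masked timesteps."""
    errors = [
        abs(p - y)
        for t in masked_idx
        for p, y in zip(prediction[t], target[t])
    ]
    return sum(errors) / len(errors)


# Toy input: 20 timesteps of 74 engineered audio features each.
frames = [[1.0] * 74 for _ in range(20)]
masked_frames, hidden = mask_timesteps(frames, mask_fraction=0.15, seed=0)
# A real model would predict the hidden frames from masked_frames; scoring
# the masked input itself gives the error of a "predict zeros" baseline.
baseline_error = reconstruction_mae(masked_frames, frames, hidden)
```

In a pre-training loop, a sequence model would take `masked_frames` as input and be trained to minimize `reconstruction_mae` against the original `frames`, after which it would be fine-tuned on the small labeled set.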

Open Access Article

Secure Internet Financial Transactions: A Framework Integrating Multi-Factor Authentication and Machine Learning

by

AlsharifHasan Mohamad Aburbeian and Manuel Fernández-Veiga

AI 2024, 5(1), 177-194; https://doi.org/10.3390/ai5010010 - 10 Jan 2024

Abstract

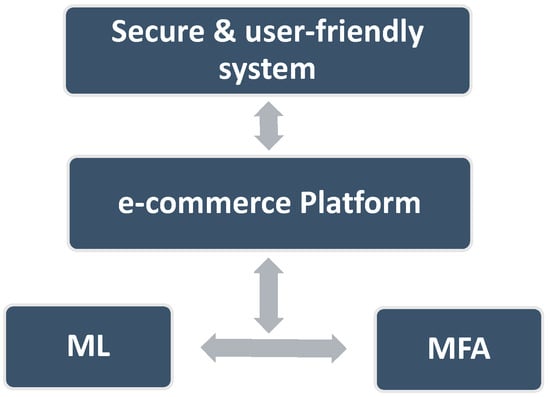

Securing online financial transactions has become a critical concern as financial services become increasingly digital. The transition to digital platforms for daily transactions has exposed customers to risks from cybercriminals. This study proposes a framework that combines multi-factor authentication and machine learning to increase the safety of online financial transactions. Our methodology uses two layers of security. The first layer incorporates two factors to authenticate users. The second layer utilizes a machine learning component, which is triggered when the system detects potential fraud. This machine learning layer employs facial recognition as a decisive authentication factor for further protection. To build the machine learning model, four supervised classifiers were tested: logistic regression, decision trees, random forest, and naive Bayes, which achieved accuracies of 97.938%, 97.881%, 96.717%, and 92.354%, respectively. The strength of this study lies in its methodology, which integrates machine learning as an embedded layer in a multi-factor authentication framework to address usability, efficacy, and the dynamic nature of various e-commerce platform features. As the financial landscape evolves, future work will continue to explore authentication factors and datasets to enhance and adapt security measures.
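
The two-layer flow described in this abstract can be sketched as a small decision function. This is an illustrative reading, not the paper's framework: the abstract does not name the two first-layer factors, so the password and one-time-code fields below are assumptions, as are the `LoginAttempt` and `authorize` names, the `fraud_score` input, and the 0.5 threshold.

```python
from dataclasses import dataclass


@dataclass
class LoginAttempt:
    password_ok: bool    # assumed first factor (not stated in the abstract)
    otp_ok: bool         # assumed second factor (not stated in the abstract)
    fraud_score: float   # hypothetical fraud-classifier output in [0, 1]
    face_match: bool     # result of a facial-recognition check


def authorize(attempt: LoginAttempt, fraud_threshold: float = 0.5) -> str:
    """Return "approved" or "denied" for one attempt (hypothetical policy)."""
    # Layer 1: both authentication factors must succeed.
    if not (attempt.password_ok and attempt.otp_ok):
        return "denied"
    # Layer 2: triggered only when potential fraud is detected; facial
    # recognition then acts as the decisive factor.
    if attempt.fraud_score >= fraud_threshold:
        return "approved" if attempt.face_match else "denied"
    return "approved"


# A low-risk attempt passes on layer 1 alone; a high-risk one needs a face match.
low_risk = authorize(LoginAttempt(True, True, 0.1, False))
high_risk = authorize(LoginAttempt(True, True, 0.9, False))
```

Keeping the second layer conditional means most users are never asked for the extra check, which is one way to balance the usability and efficacy goals the abstract mentions.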

News

12 April 2024

Meet Us at the ISPRS TC I Mid-term Symposium on Intelligent Sensing and Remote Sensing Application (ISPRS 2024 TC I), 13–17 May 2024, Changsha, China

Topics

Topic in

AI, Algorithms, BDCC, Future Internet, Informatics, Information, Languages, Publications

AI Chatbots: Threat or Opportunity?

Topic Editors: Antony Bryant, Roberto Montemanni, Min Chen, Paolo Bellavista, Kenji Suzuki, Jeanine Treffers-Daller

Deadline: 30 April 2024

Topic in

AI, Computation, Mathematics, Risks, Sustainability

Artificial Intelligence and Machine Learning in Accounting and Finance: Theories and Applications

Topic Editors: Xinwei Cao, Tran Thu Ha, Dunhui Xiao, Vasilios N. Katsikis, Ameer Hamza Khan, Shuai Li

Deadline: 31 May 2024

Topic in

AI, Applied Sciences, Buildings, CivilEng, Mathematics, Symmetry, Water

Artificial Intelligence (AI) Applied in Civil Engineering, 2nd Volume

Topic Editors: Nikos D. Lagaros, Stelios K. Georgantzinos, Denis Istrati

Deadline: 30 June 2024

Topic in

Applied Sciences, Cancers, Cells, Electronics, AI

Explainable AI for Health

Topic Editors: Yudong Zhang, Juan Manuel Gorriz, Zhengchao Dong

Deadline: 8 August 2024

Special Issues

Special Issue in

AI

AI in Finance: Leveraging AI to Transform Financial Services

Guest Editor: Xianrong (Shawn) Zheng

Deadline: 30 June 2024

Special Issue in

AI

AI and the Evolution of Work: Redefining Project Management across Disciplines

Guest Editor: Jose Berengueres

Deadline: 15 July 2024

Special Issue in

AI

Artificial Intelligence in Agriculture

Guest Editor: Arslan Munir

Deadline: 31 July 2024

Special Issue in

AI

Intelligent Systems for Industry 4.0

Guest Editors: Sharifu Ura, Angkush Kumar Ghosh

Deadline: 31 August 2024